DeezyMatch can be applied for performing the following tasks:

- Fuzzy string matching

- Record linkage

- Candidate selection for entity linking systems

- Toponym matching

- Installation and setup

- Data and directory structure in tutorials

- Run DeezyMatch as a Python module or via command line

- Examples on how to run DeezyMatch

- Reproduce Fig. 2 of DeezyMatch's paper, EMNLP2020

- How to cite DeezyMatch

- Credits

We strongly recommend installation via Anaconda:

- Create a new environment for DeezyMatch:

conda create -n py37deezy python=3.7

- Activate the environment:

conda activate py37deezy

DeezyMatch can be installed in different ways:

- Install DeezyMatch via PyPI, which tends to be the most user-friendly option:

pip install DeezyMatch

- We have provided some Jupyter Notebooks to show how different components in DeezyMatch can be run. To allow the newly created py37deezy environment to show up in the notebooks:

python -m ipykernel install --user --name py37deezy --display-name "Python (py37deezy)"

- Continue with the Tutorial!


- Install DeezyMatch from the source code:

- Clone DeezyMatch source code:

git clone https://github.com/Living-with-machines/DeezyMatch.git

- Install DeezyMatch dependencies:

cd /path/to/my/DeezyMatch

pip install -r requirements.txt

- Install DeezyMatch:

cd /path/to/my/DeezyMatch

python setup.py install

Alternatively:

cd /path/to/my/DeezyMatch

pip install -v -e .

- We have provided some Jupyter Notebooks to show how different components in DeezyMatch can be run. To allow the newly created py37deezy environment to show up in the notebooks:

python -m ipykernel install --user --name py37deezy --display-name "Python (py37deezy)"

- Continue with the Tutorial!

If you get a ModuleNotFoundError: No module named '_swigfaiss' error when running candidateRanker.py, one way to solve this issue is to run:

pip install faiss-cpu --no-cache

Refer to this page.

In the tutorials, we assume the following directory structure:

test_deezy/
├── dataset
│   ├── characters_v001.vocab
│   └── dataset-string-similarity_test.txt
└── inputs
    └── input_dfm.yaml

For this, we first create a test directory (:warning: note that this directory can be created outside of the DeezyMatch source code. After installation, DeezyMatch command lines and modules are accessible from anywhere on your local machine.):

mkdir ./test_deezy

cd ./test_deezy

mkdir dataset inputs

# Now, copy characters_v001.vocab, dataset-string-similarity_test.txt and input_dfm.yaml from DeezyMatch repo

# Arrange the files according to the above directory structure

These three files can be downloaded directly from inputs and dataset directories on DeezyMatch repo.
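The same layout can also be prepared and checked from Python. The sketch below is only an illustration: `make_tutorial_layout` and `missing_tutorial_files` are hypothetical helpers (not part of DeezyMatch), and the demo runs in a temporary directory rather than in ./test_deezy.

```python
import tempfile
from pathlib import Path

# Files the tutorials expect, relative to the test directory.
EXPECTED_FILES = (
    "dataset/characters_v001.vocab",
    "dataset/dataset-string-similarity_test.txt",
    "inputs/input_dfm.yaml",
)


def make_tutorial_layout(root):
    """Create <root>/dataset and <root>/inputs (hypothetical helper)."""
    root = Path(root)
    for sub in ("dataset", "inputs"):
        (root / sub).mkdir(parents=True, exist_ok=True)
    return root


def missing_tutorial_files(root):
    """List the expected files that have not been copied into place yet."""
    return [rel for rel in EXPECTED_FILES if not (Path(root) / rel).is_file()]


# Demo in a throwaway directory; in practice root would be ./test_deezy.
with tempfile.TemporaryDirectory() as tmp:
    demo_root = make_tutorial_layout(Path(tmp) / "test_deezy")
    print(missing_tutorial_files(demo_root))
```

An empty list from `missing_tutorial_files` means the three files are arranged as the tutorials assume.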

Note on vocabulary: characters_v001.vocab combines all characters from the different datasets we have used in our experiments (see DeezyMatch's paper and this paper for a detailed description of the datasets). It consists of 7,540 characters from multiple alphabets, including special characters.

dataset-string-similarity_test.txt contains 9995 example rows. The original dataset can be found here: https://github.com/ruipds/Toponym-Matching.

Refer to the installation section to set up DeezyMatch on your local machine.

Written in the Python programming language, DeezyMatch can be used as a stand-alone command-line tool or can be integrated as a module into other Python code. In what follows, we describe DeezyMatch's functionalities through different examples, providing both the command-line and the Python-module syntax.

In this "quick tour", we go through all the main DeezyMatch functionalities with minimal explanations. Note that we provide basic examples using the DeezyMatch Python modules here. If you want to know more about each module or run DeezyMatch via command line, refer to the relevant part of the README (also referenced in this section):

from DeezyMatch import train as dm_train

# train a new model

dm_train(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

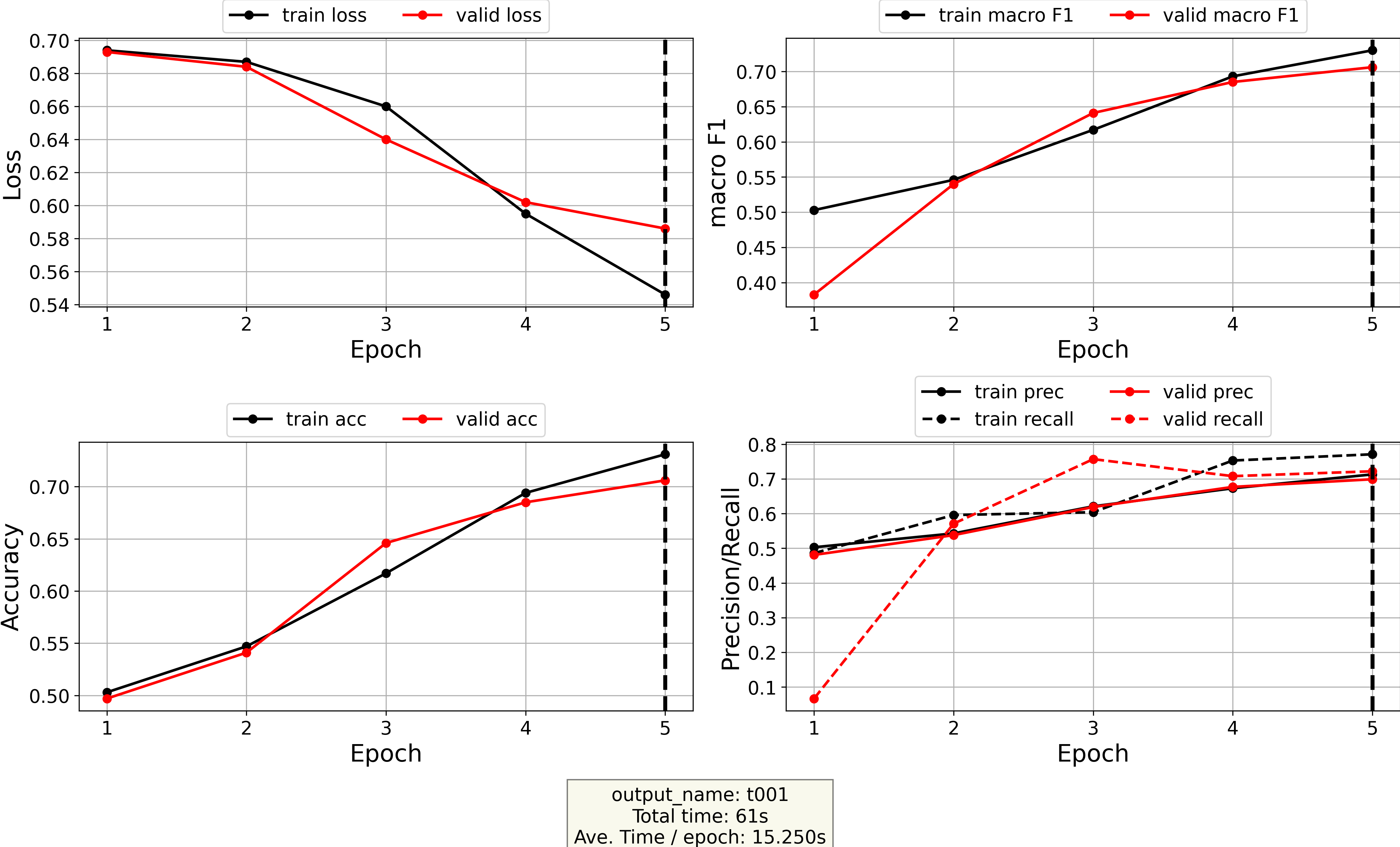

model_name="test001")

- Plot the log file (stored at ./models/test001/log.txt and contains loss/accuracy/recall/F1-scores as a function of epoch):

from DeezyMatch import plot_log

# plot log file

plot_log(path2log="./models/test001/log.txt",

output_name="t001")

from DeezyMatch import finetune as dm_finetune

# fine-tune a pretrained model stored at pretrained_model_path and pretrained_vocab_path

dm_finetune(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

model_name="finetuned_test001",

pretrained_model_path="./models/test001/test001.model",

pretrained_vocab_path="./models/test001/test001.vocab")

from DeezyMatch import inference as dm_inference

# model inference using a model stored at pretrained_model_path and pretrained_vocab_path

dm_inference(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab")

from DeezyMatch import inference as dm_inference

# generate vectors for queries (specified in dataset_path)

# using a model stored at pretrained_model_path and pretrained_vocab_path

dm_inference(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",

inference_mode="vect",

scenario="queries/test")

from DeezyMatch import inference as dm_inference

# generate vectors for candidates (specified in dataset_path)

# using a model stored at pretrained_model_path and pretrained_vocab_path

dm_inference(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",

inference_mode="vect",

scenario="candidates/test")

from DeezyMatch import combine_vecs

# combine vectors stored in queries/test and save them in combined/queries_test

combine_vecs(rnn_passes=['fwd', 'bwd'],

input_scenario='queries/test',

output_scenario='combined/queries_test',

print_every=10)

from DeezyMatch import combine_vecs

# combine vectors stored in candidates/test and save them in combined/candidates_test

combine_vecs(rnn_passes=['fwd', 'bwd'],

input_scenario='candidates/test',

output_scenario='combined/candidates_test',

print_every=10)

from DeezyMatch import candidate_ranker

# Select candidates based on L2-norm distance (aka faiss distance):

# find candidates from candidate_scenario

# for queries specified in query_scenario

candidates_pd = \

candidate_ranker(query_scenario="./combined/queries_test",

candidate_scenario="./combined/candidates_test",

ranking_metric="faiss",

selection_threshold=5.,

num_candidates=2,

search_size=2,

output_path="ranker_results/test_candidates_deezymatch",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",

number_test_rows=20)

from DeezyMatch import candidate_ranker

# Ranking on-the-fly

# find candidates from candidate_scenario

# for queries specified by the `query` argument

candidates_pd = \

candidate_ranker(candidate_scenario="./combined/candidates_test",

query=["DeezyMatch", "kasra", "fede", "mariona"],

ranking_metric="faiss",

selection_threshold=5.,

num_candidates=1,

search_size=100,

output_path="ranker_results/test_candidates_deezymatch_on_the_fly",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",

number_test_rows=20)

The candidate ranker can be initialised, to be used multiple times, by running:

from DeezyMatch import candidate_ranker_init

# initializing candidate_ranker via candidate_ranker_init

myranker = candidate_ranker_init(candidate_scenario="./combined/candidates_test",

query=["DeezyMatch", "kasra", "fede", "mariona"],

ranking_metric="faiss",

selection_threshold=5.,

num_candidates=1,

search_size=100,

output_path="ranker_results/test_candidates_deezymatch_on_the_fly",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",

number_test_rows=20)

# print the content of myranker by:

print(myranker)

# To rank the queries:

myranker.rank()

# The results are stored in:

myranker.output

DeezyMatch train module can be used to train a new model:

from DeezyMatch import train as dm_train

# train a new model

dm_train(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

model_name="test001")

The same model can be trained via command line:

DeezyMatch -i ./inputs/input_dfm.yaml -d dataset/dataset-string-similarity_test.txt -m test001

Summary of the arguments/flags:

| Func. argument | Command-line flag | Description |

|---|---|---|

| input_file_path | -i | path to the input file |

| dataset_path | -d | path to the dataset |

| model_name | -m | name of the new model |

| n_train_examples | -n | number of training examples to be used (optional) |

A new model directory called test001 will be created in models directory (as specified in the input file, see models_dir in ./inputs/input_dfm.yaml).

Notes on the dataset (e.g., dataset/dataset-string-similarity_test.txt in the above command):

- Currently, the third column (label column) should be one of (case-insensitive): ["true", "false", "1", "0"]

- Delimiter is fixed to \t for now.
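Before training, it can help to check that every row of a dataset follows these two rules. The following is a minimal sketch of such a pre-check, not DeezyMatch's own parser: `parse_dataset_line` is a hypothetical helper, and treating "true"/"1" as the positive class is an assumption.

```python
VALID_LABELS = {"true", "false", "1", "0"}


def parse_dataset_line(line):
    """Split one tab-delimited row into (s1, s2, is_match), validating the label."""
    fields = line.rstrip("\n").split("\t")
    if len(fields) < 3:
        raise ValueError(f"expected at least 3 tab-separated columns, got {len(fields)}")
    s1, s2, label = fields[0], fields[1], fields[2].strip().lower()
    if label not in VALID_LABELS:
        raise ValueError(f"unsupported label {fields[2]!r}")
    # Assumption: "true" and "1" both denote a matching pair.
    return s1, s2, label in {"true", "1"}


print(parse_dataset_line("Krutoy\tКрутой\tTRUE\n"))
```

Rows that raise `ValueError` would need fixing (for example, a space-delimited row or a label such as "yes").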

DeezyMatch keeps track of some evaluation metrics (e.g., loss/accuracy/precision/recall/F1) at each epoch. It is possible to plot the log-file by:

from DeezyMatch import plot_log

# plot log file

plot_log(path2log="./models/test001/log.txt",

output_name="t001")

or:

DeezyMatch -lp ./models/test001/log.txt -lo t001

Summary of the arguments/flags:

| Func. argument | Command-line flag | Description |

|---|---|---|

| path2log | -lp | path to the log file |

| output_name | -lo | output name |

This command generates a figure log_t001.png and stores it in models/test001 directory.

DeezyMatch stores models, vocabularies, input file, log file and checkpoints (for each epoch)

in the following directory structure (unless validation option in the input file is not equal to 1).

When DeezyMatch finishes the last epoch, it will save the model with least validation loss as well (test001.model in the following directory structure).

Moreover, DeezyMatch has an early stopping functionality.

This can be activated by setting the early_stopping_patience option in the input file.

This option specifies the number of epochs with no improvement after which training will be stopped and the model with the least validation loss will be saved.
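The early-stopping rule described above can be sketched in a few lines. This is a simplified illustration, not DeezyMatch's training loop: `epochs_until_stop` is a hypothetical helper and the validation losses are made-up numbers; only the meaning of early_stopping_patience comes from the text.

```python
def epochs_until_stop(valid_losses, patience):
    """Return (best_epoch, last_epoch) for per-epoch validation losses.

    Epochs are 1-indexed. Training stops once `patience` consecutive
    epochs have passed without a new lowest validation loss.
    """
    best_loss = float("inf")
    best_epoch = 0
    last_epoch = 0
    epochs_without_improvement = 0
    for epoch, loss in enumerate(valid_losses, start=1):
        last_epoch = epoch
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break
    return best_epoch, last_epoch


# Made-up losses: with patience=2, training stops after epoch 4
# and the model from epoch 2 is the one with the least validation loss.
print(epochs_until_stop([0.9, 0.7, 0.8, 0.75, 0.6], patience=2))
```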

models/
└── test001
    ├── checkpoint00001.model
    ├── checkpoint00001.model_state_dict
    ├── checkpoint00002.model
    ├── checkpoint00002.model_state_dict
    ├── checkpoint00003.model
    ├── checkpoint00003.model_state_dict
    ├── checkpoint00004.model
    ├── checkpoint00004.model_state_dict
    ├── checkpoint00005.model
    ├── checkpoint00005.model_state_dict
    ├── input_dfm.yaml
    ├── log_t001.png
    ├── log.txt
    ├── test001.model
    ├── test001.model_state_dict
    └── test001.vocab

The finetune module can be used to fine-tune a pretrained model:

from DeezyMatch import finetune as dm_finetune

# fine-tune a pretrained model stored at pretrained_model_path and pretrained_vocab_path

dm_finetune(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

model_name="finetuned_test001",

pretrained_model_path="./models/test001/test001.model",

pretrained_vocab_path="./models/test001/test001.vocab")

dataset_path specifies the dataset to be used for finetuning. For this example, we use the same dataset as in training; normally, other datasets are used to finetune a model. The paths to model and vocabulary of the pretrained model are specified in pretrained_model_path and pretrained_vocab_path, respectively.

It is also possible to fine-tune a model on a specified number of examples/rows from dataset_path (see the n_train_examples argument):

from DeezyMatch import finetune as dm_finetune

# fine-tune a pretrained model stored at pretrained_model_path and pretrained_vocab_path

dm_finetune(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

model_name="finetuned_test001",

pretrained_model_path="./models/test001/test001.model",

pretrained_vocab_path="./models/test001/test001.vocab",

n_train_examples=100)

The same can be done via command line:

DeezyMatch -i ./inputs/input_dfm.yaml -d dataset/dataset-string-similarity_test.txt -m finetuned_test001 -f ./models/test001/test001.model -v ./models/test001/test001.vocab -n 100

Summary of the arguments/flags:

| Func. argument | Command-line flag | Description |

|---|---|---|

| input_file_path | -i | path to the input file |

| dataset_path | -d | path to the dataset |

| model_name | -m | name of the new, fine-tuned model |

| pretrained_model_path | -f | path to the pretrained model |

| pretrained_vocab_path | -v | path to the pretrained vocabulary |

| --- | -pm | print all parameters in a model |

| n_train_examples | -n | number of training examples to be used (optional) |

If the -n flag (or n_train_examples argument) is not specified, the train/valid/test proportions are read from the input file.

A new fine-tuned model called finetuned_test001 will be stored in models directory. In this example, two components in the neural network architecture were frozen, that is, not changed during fine-tuning (see layers_to_freeze in the input file). When running the above command, DeezyMatch lists the parameters in the model and whether or not they will be used in finetuning:

============================================================

List all parameters in the model

============================================================

emb.weight False

rnn_1.weight_ih_l0 False

rnn_1.weight_hh_l0 False

rnn_1.bias_ih_l0 False

rnn_1.bias_hh_l0 False

rnn_1.weight_ih_l0_reverse False

rnn_1.weight_hh_l0_reverse False

rnn_1.bias_ih_l0_reverse False

rnn_1.bias_hh_l0_reverse False

rnn_1.weight_ih_l1 False

rnn_1.weight_hh_l1 False

rnn_1.bias_ih_l1 False

rnn_1.bias_hh_l1 False

rnn_1.weight_ih_l1_reverse False

rnn_1.weight_hh_l1_reverse False

rnn_1.bias_ih_l1_reverse False

rnn_1.bias_hh_l1_reverse False

attn_step1.weight False

attn_step1.bias False

attn_step2.weight False

attn_step2.bias False

fc1.weight True

fc1.bias True

fc2.weight True

fc2.bias True

============================================================

The first column lists the parameters in the model, and the second column specifies if those parameters will be used in the optimization or not.

In our example, we set layers_to_freeze: ["emb", "rnn_1", "attn"], so all the parameters except for fc1 and fc2 will not be changed during the training.
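The relation between layers_to_freeze and the True/False column can be illustrated with a small sketch. It assumes that a parameter is frozen when its name starts with one of the listed entries, which is consistent with the output above ("attn" covers attn_step1 and attn_step2) but is not taken from DeezyMatch's source; `trainable_flags` is a hypothetical helper.

```python
# A subset of the parameter names listed in the output above.
PARAM_NAMES = [
    "emb.weight",
    "rnn_1.weight_ih_l0",
    "attn_step1.weight",
    "attn_step2.bias",
    "fc1.weight",
    "fc2.bias",
]


def trainable_flags(param_names, layers_to_freeze):
    """Map each parameter name to True if it would still be optimized."""
    return {
        name: not any(name.startswith(layer) for layer in layers_to_freeze)
        for name in param_names
    }


flags = trainable_flags(PARAM_NAMES, ["emb", "rnn_1", "attn"])
for name, trainable in flags.items():
    print(name, trainable)
```

Under this assumption, only the fc1 and fc2 parameters remain trainable, matching the listing above.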

It is possible to print all parameters in a model by:

DeezyMatch -pm models/finetuned_test001/finetuned_test001.model

which generates similar output as above.

When a model is trained/fine-tuned, inference module can be used for predictions/model-inference. Again, we use the same dataset (dataset/dataset-string-similarity_test.txt) as before in this example. The paths to model and vocabulary of the pretrained model are specified in pretrained_model_path and pretrained_vocab_path, respectively.

from DeezyMatch import inference as dm_inference

# model inference using a model stored at pretrained_model_path and pretrained_vocab_path

dm_inference(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab")

Similarly via command line:

DeezyMatch --deezy_mode inference -i ./inputs/input_dfm.yaml -d dataset/dataset-string-similarity_test.txt -m ./models/finetuned_test001/finetuned_test001.model -v ./models/finetuned_test001/finetuned_test001.vocab -mode test

Summary of the arguments/flags:

| Func. argument | Command-line flag | Description |

|---|---|---|

| input_file_path | -i | path to the input file |

| dataset_path | -d | path to the dataset |

| pretrained_model_path | -m | path to the pretrained model |

| pretrained_vocab_path | -v | path to the pretrained vocabulary |

| inference_mode | -mode | two options: test (inference, default), vect (generate vector representations) |

| scenario | -sc, --scenario | name of the experiment top-directory (only for inference_mode='vect') |

| cutoff | -n | number of examples to be used (optional) |

The inference component creates a file: models/finetuned_test001/pred_results_dataset-string-similarity_test.txt in which:

# s1 s2 prediction p0 p1 label

la dom nxy ลำโดมน้อย 1 0.3938 0.6062 1

Krutoy Крутой 1 0.1193 0.8807 1

Sharunyata Shartjugskij 0 0.9560 0.0440 0

同心村 tong xin cun 1 0.1710 0.8290 1

Engeskjæran Abrahamskjeret 0 0.8603 0.1397 0

Qermez Khalifeh-ye `Olya Qermez Khalīfeh-ye ‘Olyā 1 0.1599 0.8401 1

კირენია Κυρά 0 0.8704 0.1296 0

Ozero Pogoreloe Ozero Pogoreloye 1 0.2796 0.7204 1

Anfijld ਮਾਕਰੋਨ ਸਟੇਡੀਅਮ 0 0.5527 0.4473 0

Qanât el-Manzala El-Manzala Canal 1 0.2040 0.7960 1

唐家湾 zhang jia wan zi 1 0.4748 0.5252 0

Ozernoje Ozernoye 1 0.1916 0.8084 1

小罗坝 shi yuan qiang 0 0.6955 0.3045 0

Wādī Qānī Uàdi Gani 1 0.3430 0.6570 1

p0 and p1 are probabilities assigned to labels 0 and 1, respectively.

For example, in the second row, the actual label is 1 (last column),

the predicted label is 1 (third column), and the model confidence on the predicted label is p1=0.8807.

In these examples, DeezyMatch correctly predicts the label in all rows except for

唐家湾 zhang jia wan zi (with p0=0.4748 and p1=0.5252).
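To find such mismatches programmatically, the prediction file can be parsed row by row. The sketch below assumes the columns are tab-separated and that the header line starts with "#"; `parse_prediction_row`, `confidence` and `misclassified` are hypothetical helpers, and the sample rows are copied from the output above.

```python
def parse_prediction_row(line):
    """Parse one row with columns: s1, s2, prediction, p0, p1, label."""
    s1, s2, pred, p0, p1, label = line.rstrip("\n").split("\t")
    return {"s1": s1, "s2": s2, "prediction": int(pred),
            "p0": float(p0), "p1": float(p1), "label": int(label)}


def confidence(row):
    """Probability the model assigned to its own predicted label."""
    return row["p1"] if row["prediction"] == 1 else row["p0"]


def misclassified(lines):
    """Return parsed rows whose prediction differs from the label."""
    rows = [parse_prediction_row(l) for l in lines
            if l.strip() and not l.startswith("#")]
    return [r for r in rows if r["prediction"] != r["label"]]


SAMPLE = [
    "# s1\ts2\tprediction\tp0\tp1\tlabel",
    "Krutoy\tКрутой\t1\t0.1193\t0.8807\t1",
    "唐家湾\tzhang jia wan zi\t1\t0.4748\t0.5252\t0",
]
for row in misclassified(SAMPLE):
    print(row["s1"], row["s2"], confidence(row))
```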

inference module can also be used to generate vector representations for a set of strings in a dataset. This is a required step for alias selection and candidate ranking (which we will talk about later). We first create vector representations for query mentions (we assume the query mentions are stored in dataset/dataset-string-similarity_test.txt):

from DeezyMatch import inference as dm_inference

# generate vectors for queries (specified in dataset_path)

# using a model stored at pretrained_model_path and pretrained_vocab_path

dm_inference(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",

inference_mode="vect",

scenario="queries/test")

Compared to the previous section, here we have two additional arguments:

- inference_mode="vect": generate vector representations for the first column in dataset_path.

- scenario: directory to store the vectors.

The same can be done via command line:

DeezyMatch --deezy_mode inference -i ./inputs/input_dfm.yaml -d dataset/dataset-string-similarity_test.txt -m ./models/finetuned_test001/finetuned_test001.model -v ./models/finetuned_test001/finetuned_test001.vocab -mode vect --scenario queries/test

For a summary of the arguments/flags, refer to the table in model inference.

The resulting directory structure is:

queries/
└── test
    ├── dataframe.df
    ├── embeddings
    │   ├── rnn_fwd_0
    │   ├── rnn_fwd_1
    │   ├── rnn_fwd_2
    │   ├── rnn_fwd_3
    │   ├── rnn_fwd_4
    │   ├── rnn_fwd_5
    │   ├── rnn_fwd_6
    │   ├── rnn_fwd_7
    │   ├── rnn_fwd_8
    │   ├── rnn_fwd_9
    │   └── ...
    ├── input_dfm.yaml
    └── log.txt

(embeddings dir contains all the vector representations).

We repeat this step for candidates (again, we use the same dataset):

from DeezyMatch import inference as dm_inference

# generate vectors for candidates (specified in dataset_path)

# using a model stored at pretrained_model_path and pretrained_vocab_path

dm_inference(input_file_path="./inputs/input_dfm.yaml",

dataset_path="dataset/dataset-string-similarity_test.txt",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",

inference_mode="vect",

scenario="candidates/test")

or via command line:

DeezyMatch --deezy_mode inference -i ./inputs/input_dfm.yaml -d dataset/dataset-string-similarity_test.txt -m ./models/finetuned_test001/finetuned_test001.model -v ./models/finetuned_test001/finetuned_test001.vocab -mode vect --scenario candidates/test

For a summary of the arguments/flags, refer to the table in model inference.

The resulting directory structure is:

candidates/
└── test
    ├── dataframe.df
    ├── embeddings
    │   ├── rnn_fwd_0
    │   ├── rnn_fwd_1
    │   ├── rnn_fwd_2
    │   ├── rnn_fwd_3
    │   ├── rnn_fwd_4
    │   ├── rnn_fwd_5
    │   ├── rnn_fwd_6
    │   ├── rnn_fwd_7
    │   ├── rnn_fwd_8
    │   ├── rnn_fwd_9
    │   └── ...
    ├── input_dfm.yaml
    └── log.txt

Before using the candidate_ranker module of DeezyMatch, we need to:

1. Generate vector representations for both query and candidate mentions

2. Combine vector representations

Step 1 is already discussed in detail in the previous section: Generate query and candidate vectors.

Step 2 is required if query or candidate vector representations are stored in several files (normally the case!). The combine_vecs module assembles those vectors and stores the results in output_scenario (see function below). For query vectors:

from DeezyMatch import combine_vecs

# combine vectors stored in queries/test and save them in combined/queries_test

combine_vecs(rnn_passes=['fwd', 'bwd'],

input_scenario='queries/test',

output_scenario='combined/queries_test',

print_every=10)

Similarly, for candidate vectors:

from DeezyMatch import combine_vecs

# combine vectors stored in candidates/test and save them in combined/candidates_test

combine_vecs(rnn_passes=['fwd', 'bwd'],

input_scenario='candidates/test',

output_scenario='combined/candidates_test',

print_every=10)

Here, rnn_passes specifies that combine_vecs should assemble all vectors generated in the forward and backward RNN/GRU/LSTM passes (which are stored in the input_scenario directory).

NOTE: we have a backward pass only if bidirectional is set to True in the input file.

The results (for both query and candidate vectors) are stored in the output_scenario as follows:

combined/
├── candidates_test
│   ├── bwd_id.pt
│   ├── bwd_items.npy
│   ├── bwd.pt
│   ├── fwd_id.pt
│   ├── fwd_items.npy
│   ├── fwd.pt
│   └── input_dfm.yaml
└── queries_test
    ├── bwd_id.pt
    ├── bwd_items.npy
    ├── bwd.pt
    ├── fwd_id.pt
    ├── fwd_items.npy
    ├── fwd.pt
    └── input_dfm.yaml

The above steps can be done via command line, for query vectors:

DeezyMatch --deezy_mode combine_vecs -p fwd,bwd -sc queries/test -combs combined/queries_test

For candidate vectors:

DeezyMatch --deezy_mode combine_vecs -p fwd,bwd -sc candidates/test -combs combined/candidates_test

Summary of the arguments/flags:

| Func. argument | Command-line flag | Description |

|---|---|---|

| rnn_passes | -p | RNN/GRU/LSTM passes to be used in assembling vectors (fwd or bwd) |

| input_scenario | -sc | name of the input top-directory |

| output_scenario | -combs | name of the output top-directory |

| input_file_path | -i | path to the input file. "default": read input file in input_scenario |

| print_every | --- | interval to print the progress in assembling vectors |

| sel_device | --- | set the device (cpu, cuda, cuda:0, cuda:1, ...). if "default", the device will be read from the input file. |

| save_df | --- | save strings of the first column in queries/candidates files (default: True) |

Candidate ranker uses the vector representations, generated and assembled in the previous sections,

to find a set of candidates (from a dataset or knowledge base) for given queries in the same or another dataset.

In the following example, for queries stored in query_scenario,

we want to find 2 candidates (specified by num_candidates) from a dataset stored in candidate_scenario.

from DeezyMatch import candidate_ranker

# Select candidates based on L2-norm distance (aka faiss distance):

# find candidates from candidate_scenario

# for queries specified in query_scenario

candidates_pd = \

candidate_ranker(query_scenario="./combined/queries_test",

candidate_scenario="./combined/candidates_test",

ranking_metric="faiss",

selection_threshold=5.,

num_candidates=2,

search_size=2,

output_path="ranker_results/test_candidates_deezymatch",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",

number_test_rows=20)

query_scenario is the directory that contains all the assembled query vectors (see the section on combining vector representations above) while candidate_scenario contains the assembled candidate vectors.

ranking_metric: choices are faiss (used here, L2-norm distance), cosine (cosine distance), conf (confidence as measured by DeezyMatch prediction outputs).

In our experiments, conf was not a good metric to rank candidates. Consider using faiss or cosine instead.

selection_threshold: changes according to the ranking_metric:

A candidate will be selected if:

faiss-distance <= selection_threshold

cosine-distance <= selection_threshold

prediction-confidence >= selection_threshold

For conf (i.e., prediction-confidence), the threshold corresponds to the minimum accepted value, while for the faiss and cosine metrics, the threshold is the maximum accepted value.

cosine and conf scores are between [0, 1] while faiss distance can take any values from [0, +∞).
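The selection rule for the three metrics can be written as a single comparison. This is a sketch of the rule as stated above, not DeezyMatch's code; `is_selected` is a hypothetical helper.

```python
def is_selected(ranking_metric, score, selection_threshold):
    """Apply the selection rule for one candidate.

    faiss/cosine are distances (smaller is better), so the threshold is a
    maximum; conf is a confidence (larger is better), so it is a minimum.
    """
    if ranking_metric in ("faiss", "cosine"):
        return score <= selection_threshold
    if ranking_metric == "conf":
        return score >= selection_threshold
    raise ValueError(f"unknown ranking_metric: {ranking_metric!r}")


print(is_selected("faiss", 3.3053, 5.0))   # distance below the maximum
print(is_selected("cosine", 0.50, 0.49))   # distance above the maximum
print(is_selected("conf", 0.60, 0.50))     # confidence above the minimum
```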

search_size: for a given query, DeezyMatch searches for candidates iteratively. At each iteration, the selected ranking metric is computed between the query and a batch of search_size candidates, and if the number of desired candidates (specified by num_candidates) is not reached, a new batch of search_size candidates is tested in the next iteration. This continues until num_candidates candidates are found or all the candidates are tested.

If the role of search_size argument is not clear, refer to Tips / Suggestions on DeezyMatch functionalities.
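The interplay between search_size and num_candidates can be sketched as a batched loop. This is a simplified illustration under stated assumptions, not the actual implementation: `iterative_search` is a hypothetical helper, candidates are assumed to arrive ordered from nearest to farthest, and a distance metric (smaller is better) is assumed.

```python
def iterative_search(distances, selection_threshold, num_candidates, search_size):
    """Test candidates in batches of `search_size` until enough are selected.

    `distances` is a list of (candidate, distance) pairs, assumed to be
    ordered nearest first. Returns (selected, number_of_batches_tested).
    """
    selected = []
    num_searches = 0
    for start in range(0, len(distances), search_size):
        num_searches += 1
        for candidate, dist in distances[start:start + search_size]:
            if dist <= selection_threshold and len(selected) < num_candidates:
                selected.append((candidate, dist))
        if len(selected) >= num_candidates:
            break  # enough candidates found, no further batch is needed
    return selected, num_searches


# Made-up distances for illustration.
pool = [("a", 0.0), ("b", 3.3), ("c", 6.0), ("d", 4.0)]
print(iterative_search(pool, selection_threshold=5.0, num_candidates=2, search_size=2))
print(iterative_search(pool, selection_threshold=5.0, num_candidates=3, search_size=2))
```

In the first call one batch is enough; in the second, a further batch is tested because only two candidates pass the threshold in the first one.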

The DeezyMatch model and its vocabulary are specified by pretrained_model_path and pretrained_vocab_path, respectively.

number_test_rows: only for testing. It specifies the number of queries to be used for testing.

The results can be accessed directly from the candidates_pd variable (see the above command). They are also saved in output_path in a pandas dataframe format. The first few rows are:

query pred_score 1-pred_score faiss_distance cosine_dist candidate_original_ids query_original_id num_all_searches

id

0 la dom nxy {'la dom nxy': 0.6498, 'Laowuxia': 0.5418} {'la dom nxy': 0.35019999999999996, 'Laowuxia'... {'la dom nxy': 0.0, 'Laowuxia': 3.3053} {'la dom nxy': 0.0, 'Laowuxia': 0.159} {'la dom nxy': 0, 'Laowuxia': 9624} 0 2

1 Krutoy {'Krutoy': 0.7946, 'wrkt': 0.8277} {'Krutoy': 0.20540000000000003, 'wrkt': 0.1723} {'Krutoy': 0.0, 'wrkt': 2.2673} {'Krutoy': -0.0, 'wrkt': 0.0599} {'Krutoy': 1, 'wrkt': 7955} 1 2

2 Sharunyata {'Sharunyata': 0.7379, 'Shēlah-ye Nasar-e Jari... {'Sharunyata': 0.2621, 'Shēlah-ye Nasar-e Jari... {'Sharunyata': 0.0, 'Shēlah-ye Nasar-e Jaritā'... {'Sharunyata': 0.0, 'Shēlah-ye Nasar-e Jaritā'... {'Sharunyata': 2, 'Shēlah-ye Nasar-e Jaritā': ... 2 2

3 Sutangcun {'Sutangcun': 0.5301, 'Seohongcheon': 0.5691} {'Sutangcun': 0.4699, 'Seohongcheon': 0.430899... {'Sutangcun': 0.0, 'Seohongcheon': 2.7927} {'Sutangcun': 0.0, 'Seohongcheon': 0.1545} {'Sutangcun': 3, 'Seohongcheon': 2184} 3 2

4 Jowkār-e Shafī‘ {'Jowkār-e Shafī‘': 0.6177, 'wad thung kha phi... {'Jowkār-e Shafī‘': 0.3823, 'wad thung kha phi... {'Jowkār-e Shafī‘': 0.0, 'wad thung kha phi': ... {'Jowkār-e Shafī‘': 0.0, 'wad thung kha phi': ... {'Jowkār-e Shafī‘': 4, 'wad thung kha phi': 9489} 4 2

5 rongreiyn ban hwy h wk cxmthxng {'rongreiyn ban hwy h wk cxmthxng': 0.7189, 'r... {'rongreiyn ban hwy h wk cxmthxng': 0.2811, 'r... {'rongreiyn ban hwy h wk cxmthxng': 0.0, 'rong... {'rongreiyn ban hwy h wk cxmthxng': 0.0, 'rong... {'rongreiyn ban hwy h wk cxmthxng': 5, 'rongre... 5 2

As expected, the closest candidate mention in each row (in pred_score, faiss_distance, cosine_dist and candidate_original_ids) is the same as the query (second column), as we have used one dataset for both queries and candidates.
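Each cell in the distance columns maps candidate strings to scores, so a single row can be post-processed with plain Python. The sketch below assumes the in-memory cells are dictionaries, as the printed output suggests; `rank_candidates` is a hypothetical helper, and the sample values are taken from the first row above.

```python
def rank_candidates(score_by_candidate, query=None):
    """Sort candidates by ascending distance, optionally dropping the query itself."""
    ranked = sorted(score_by_candidate.items(), key=lambda item: item[1])
    if query is not None:
        ranked = [(cand, score) for cand, score in ranked if cand != query]
    return ranked


faiss_cell = {"Laowuxia": 3.3053, "la dom nxy": 0.0}
print(rank_candidates(faiss_cell))
print(rank_candidates(faiss_cell, query="la dom nxy"))
```

Dropping the query is useful when, as here, queries and candidates come from the same dataset and the nearest candidate is the query itself.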

Similarly, the above results can be generated via command line:

DeezyMatch --deezy_mode candidate_ranker -qs ./combined/queries_test -cs ./combined/candidates_test -rm faiss -t 5 -n 2 -sz 2 -o ranker_results/test_candidates_deezymatch -mp ./models/finetuned_test001/finetuned_test001.model -v ./models/finetuned_test001/finetuned_test001.vocab -tn 20

Summary of the arguments/flags:

| Func. argument | Command-line flag | Description |

|---|---|---|

| query_scenario | -qs | directory that contains all the assembled query vectors |

| candidate_scenario | -cs | directory that contains all the assembled candidate vectors |

| ranking_metric | -rm | choices are faiss (used here, L2-norm distance), cosine (cosine distance), conf (confidence as measured by DeezyMatch prediction outputs) |

| selection_threshold | -t | changes according to the ranking_metric, a candidate will be selected if: faiss-distance <= selection_threshold, cosine-distance <= selection_threshold, prediction-confidence >= selection_threshold |

| query | -q | one string or a list of strings to be used in candidate ranking on-the-fly |

| num_candidates | -n | number of desired candidates |

| search_size | -sz | number of candidates to be tested at each iteration |

| output_path | -o | path to the output file |

| pretrained_model_path | -mp | path to the pretrained model |

| pretrained_vocab_path | -v | path to the pretrained vocabulary |

| input_file_path | -i | path to the input file. "default": read input file in candidate_scenario |

| number_test_rows | -tn | number of examples to be used (optional, normally for testing) |

Other methods

- Select candidates based on cosine distance:

from DeezyMatch import candidate_ranker

# Select candidates based on cosine distance:

# find candidates from candidate_scenario

# for queries specified in query_scenario

candidates_pd = \

candidate_ranker(query_scenario="./combined/queries_test",

candidate_scenario="./combined/candidates_test",

ranking_metric="cosine",

selection_threshold=0.49,

num_candidates=2,

search_size=2,

output_path="ranker_results/test_candidates_deezymatch",

pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",

pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",

number_test_rows=20)

Note that the only differences compared to the previous command are ranking_metric="cosine" and selection_threshold=0.49.

For a list of input strings (specified in query argument), DeezyMatch can rank candidates (stored in candidate_scenario) on-the-fly. Here, DeezyMatch generates and assembles the vector representations of strings in query on-the-fly.

from DeezyMatch import candidate_ranker

# Ranking on-the-fly
# find candidates from candidate_scenario
# for queries specified by the `query` argument
candidates_pd = \
    candidate_ranker(candidate_scenario="./combined/candidates_test",
                     query=["DeezyMatch", "kasra", "fede", "mariona"],
                     ranking_metric="faiss",
                     selection_threshold=5.,
                     num_candidates=1,
                     search_size=100,
                     output_path="ranker_results/test_candidates_deezymatch_on_the_fly",
                     pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",
                     pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",
                     number_test_rows=20)

The candidate ranker can be initialised, to be used multiple times, by running:

from DeezyMatch import candidate_ranker_init

# initializing candidate_ranker via candidate_ranker_init
myranker = candidate_ranker_init(candidate_scenario="./combined/candidates_test",
                                 query=["DeezyMatch", "kasra", "fede", "mariona"],
                                 ranking_metric="faiss",
                                 selection_threshold=5.,
                                 num_candidates=1,
                                 search_size=100,
                                 output_path="ranker_results/test_candidates_deezymatch_on_the_fly",
                                 pretrained_model_path="./models/finetuned_test001/finetuned_test001.model",
                                 pretrained_vocab_path="./models/finetuned_test001/finetuned_test001.vocab",
                                 number_test_rows=20)

The content of myranker can be printed by:

print(myranker)

which results in:

-------------------------
* Candidate ranker params
-------------------------

Queries are based on the following list:
['DeezyMatch', 'kasra', 'fede', 'mariona']

candidate_scenario: ./combined/candidates_test

---Searching params---
num_candidates: 1
ranking_metric: faiss
selection_threshold: 5.0
search_size: 100
number_test_rows: 20

---I/O---
input_file_path: default (path: ./combined/candidates_test/input_dfm.yaml)
output_path: ranker_results/test_candidates_deezymatch_on_the_fly
pretrained_model_path: ./models/finetuned_test001/finetuned_test001.model
pretrained_vocab_path: ./models/finetuned_test001/finetuned_test001.vocab

To rank the queries:

myranker.rank()

The results are stored in:

myranker.output

All the query-related parameters can be changed via the set_query method, for example:

myranker.set_query(query=["another_example"])

Other parameters that can be set this way include:

query

query_scenario

ranking_metric

selection_threshold

num_candidates

search_size

number_test_rows

output_path

Again, we can rank the candidates for the new query by:

myranker.rank()
# to access output:
myranker.output

- As already mentioned, based on our experiments, conf is not a good metric for ranking candidates. Consider using faiss or cosine.

- Adding a prefix/suffix to input strings (see the prefix_suffix option in the input file) can greatly enhance the ranking results. However, we recommend a one-character-long prefix/suffix; longer ones may increase the computation time.

- In candidate_ranker, the user specifies a ranking_metric based on which the candidates are selected and ranked. However, DeezyMatch also reports the values of the other metrics for those candidates. For example, if the user selects ranking_metric="faiss", the candidates are selected based on the faiss-distance metric. At the same time, the values of the cosine and conf metrics for those candidates (ranked according to the selected metric, in this case faiss) are also reported.

- What is the role of search_size in candidate ranker?

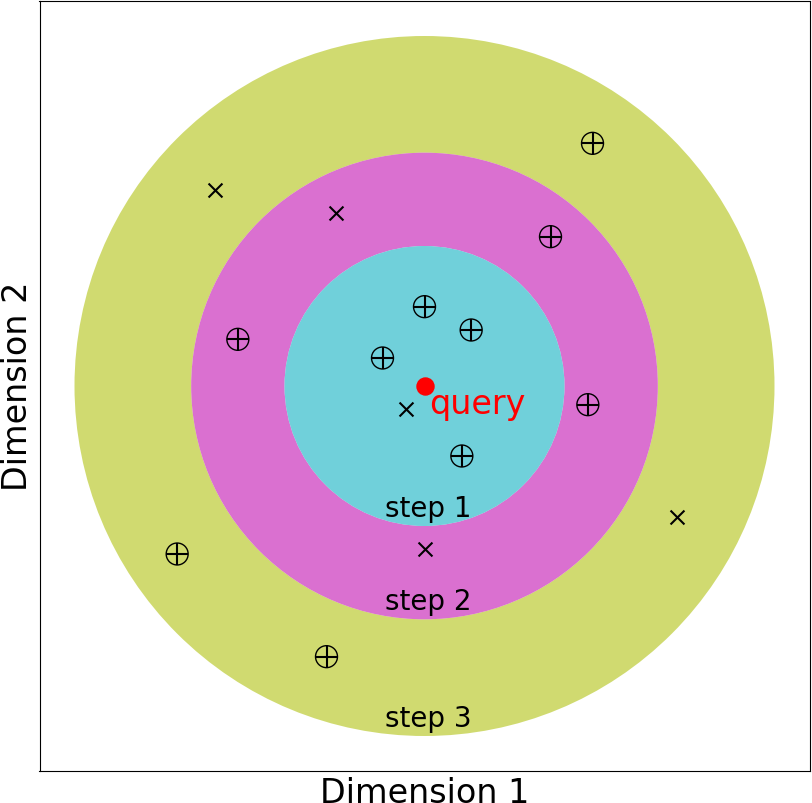

For a given query, DeezyMatch searches for candidates iteratively. If we set search_size to five, at each iteration (i.e., one colored region in the figure), the query vector is compared with five potential candidate vectors according to the selected ranking metric (ranking_metric argument). In step-1, the five closest candidate vectors, as measured by L2-norm distance, are examined. In the figure, four (out of five) candidate vectors pass the threshold (specified by the selection_threshold argument) in step-1. However, in this example, we assume num_candidates is 10, so DeezyMatch examines a second batch of potential candidates, again five vectors (as specified by search_size). Three (out of five) candidates pass the threshold in step-2. Finally, in the third iteration, three more candidates are found. DeezyMatch collects the information of these ten candidates and goes on to the next query.

This adaptive search algorithm significantly reduces the computation time to find and rank a set of candidates in a (large) dataset. Instead of searching the whole dataset, DeezyMatch iteratively compares a query vector with the "most-promising" candidates.
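The loop described above can be sketched in plain Python. This is a simplified illustration under stated assumptions, not DeezyMatch's actual implementation: the function name adaptive_search and its arguments are hypothetical, candidates are ordered by an exhaustive L2 sort (DeezyMatch relies on faiss for this step), and a candidate is assumed to pass when its metric value is at or below the threshold.

```python
import math

def l2_distance(u, v):
    """Euclidean (L2-norm) distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def adaptive_search(query, candidates, metric, threshold,
                    num_candidates, search_size):
    """Examine candidates in batches of `search_size`, closest first.

    `candidates` is a list of (label, vector) pairs. Returns the selected
    (label, score) pairs and the number of vectors examined.
    """
    # Most promising candidates first, as measured by L2 distance
    ordered = sorted(candidates, key=lambda item: l2_distance(query, item[1]))
    selected = []
    examined = 0
    for start in range(0, len(ordered), search_size):
        batch = ordered[start:start + search_size]
        examined += len(batch)
        for label, vector in batch:
            score = metric(query, vector)
            if score <= threshold:
                selected.append((label, score))
        # Stop as soon as enough candidates have passed the threshold
        if len(selected) >= num_candidates:
            break
    selected.sort(key=lambda pair: pair[1])
    return selected[:num_candidates], examined

# Hypothetical 1-D candidate vectors at distances 1, 2, ..., 10 from the query
toy_candidates = [("c%d" % i, [float(i)]) for i in range(1, 11)]
found, examined = adaptive_search([0.0], toy_candidates, l2_distance,
                                  threshold=100.0, num_candidates=4,
                                  search_size=2)
print([label for label, _ in found], examined)
```

In this toy run every vector passes the threshold, so the search stops after two batches of two vectors and leaves the remaining six candidates unexamined.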

In most use cases, search_size can be set to a value greater than or equal to num_candidates.

However, if num_candidates is very large, it is better to set search_size to a lower value.

Let's clarify this with an example. First, assume num_candidates=4 (i.e., four candidates are desired for each query). If we set search_size to a value less than 4, say 2, DeezyMatch needs at least two iterations: in the first iteration, it looks at the two closest candidate vectors (as search_size is 2), and in the second iteration, candidate vectors 3 and 4 are examined. Another choice is search_size=4. Here, DeezyMatch looks at four candidates in the first iteration; if they all pass the threshold, the process concludes. If not, it searches candidates 5-8 in the next iteration.

Now, let's assume num_candidates=1001 (i.e., 1001 candidates are desired for each query). If we set search_size=1000, DeezyMatch has to search at least 2000 candidates (2 x 1000 search_size). If we set search_size=100, DeezyMatch has to search at least 1100 candidates (11 x 100 search_size), that is, 900 fewer vectors. In the end, it is a trade-off between the number of iterations and search_size.
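The arithmetic in this example can be checked with a few lines of Python. The helper min_vectors_examined is hypothetical (not part of DeezyMatch) and computes the best case only, assuming every examined vector passes the threshold.

```python
def min_vectors_examined(num_candidates, search_size):
    """Best case: smallest number of iterations and vectors examined."""
    # Ceiling division: batches needed to cover num_candidates vectors
    iterations = (num_candidates + search_size - 1) // search_size
    return iterations, iterations * search_size

for num_candidates, search_size in [(4, 2), (4, 4), (1001, 1000), (1001, 100)]:
    iterations, vectors = min_vectors_examined(num_candidates, search_size)
    print("num_candidates=%d, search_size=%d: %d iteration(s), %d vectors"
          % (num_candidates, search_size, iterations, vectors))
```

For num_candidates=1001, this gives 2 iterations and 2000 vectors with search_size=1000, against 11 iterations and 1100 vectors with search_size=100, matching the figures above.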

Please consider acknowledging DeezyMatch if it helps you to obtain results and figures for publications or presentations, by citing:

ACL link: https://www.aclweb.org/anthology/2020.emnlp-demos.9/

Kasra Hosseini, Federico Nanni, and Mariona Coll Ardanuy (2020), DeezyMatch: A Flexible Deep Learning Approach to Fuzzy String Matching, In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, pages 62–69. Association for Computational Linguistics.

and in BibTeX:

@inproceedings{hosseini-etal-2020-deezymatch,
    title = "{D}eezy{M}atch: A Flexible Deep Learning Approach to Fuzzy String Matching",
    author = "Hosseini, Kasra and
      Nanni, Federico and
      Coll Ardanuy, Mariona",
    booktitle = "Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations",
    month = oct,
    year = "2020",
    address = "Online",
    publisher = "Association for Computational Linguistics",
    url = "https://www.aclweb.org/anthology/2020.emnlp-demos.9",
    pages = "62--69",
    abstract = "We present DeezyMatch, a free, open-source software library written in Python for fuzzy string matching and candidate ranking. Its pair classifier supports various deep neural network architectures for training new classifiers and for fine-tuning a pretrained model, which paves the way for transfer learning in fuzzy string matching. This approach is especially useful where only limited training examples are available. The learned DeezyMatch models can be used to generate rich vector representations from string inputs. The candidate ranker component in DeezyMatch uses these vector representations to find, for a given query, the best matching candidates in a knowledge base. It uses an adaptive searching algorithm applicable to large knowledge bases and query sets. We describe DeezyMatch{'}s functionality, design and implementation, accompanied by a use case in toponym matching and candidate ranking in realistic noisy datasets.",
}

The results presented in this paper were generated by DeezyMatch v1.2.0 (Released: Sep 15, 2020). You can reproduce Fig. 2 of DeezyMatch's paper, EMNLP2020, here.

This project extensively uses the ideas/neural-network-architecture published in https://github.com/ruipds/Toponym-Matching.

This work was supported by Living with Machines (AHRC grant AH/S01179X/1) and The Alan Turing Institute (EPSRC grant EP/N510129/1).