diff --git a/tutorials/backend/hasura-v3-ts-connector/.gitignore b/tutorials/backend/hasura-v3-ts-connector/.gitignore

deleted file mode 100644

index 723ef36f4..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/.gitignore

+++ /dev/null

@@ -1 +0,0 @@

-.idea

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/.gitignore b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/.gitignore

deleted file mode 100755

index 577571c44..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/.gitignore

+++ /dev/null

@@ -1,7 +0,0 @@

-public

-.cache

-node_modules

-*DS_Store

-*.env

-

-.idea/

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/Dockerfile b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/Dockerfile

deleted file mode 100644

index db96b6b7e..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/Dockerfile

+++ /dev/null

@@ -1,18 +0,0 @@

-FROM gcr.io/websitecloud-352908/github.com/hasura/gatsby-gitbook-starter:974b679a29e3bfd1d8905f7f9a29cb6ecf2456e6

-

-# Create app directory

-WORKDIR /app

-

-RUN cd /app

-

-# Remove already existing dummy content

-RUN rm -R content

-

-# Bundle app source

-COPY . .

-

-# Build static files

-RUN GATSBY_ALGOLIA_APP_ID=WCBB1VVLRC GATSBY_ALGOLIA_SEARCH_KEY=baf43aa3921858bc144d0fe5ce85b526 npm run build

-

-# serve dist folder on port 8080

-CMD ["gatsby", "serve", "--verbose", "--prefix-paths", "-p", "8080", "--host", "0.0.0.0"]

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/Dockerfile.localdev b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/Dockerfile.localdev

deleted file mode 100644

index b9551ebe4..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/Dockerfile.localdev

+++ /dev/null

@@ -1,28 +0,0 @@

-FROM --platform=linux/amd64 node:14.16.0

-

-# update this line when gatsby-gitbook-starter repo changes

-RUN sh -c 'echo -e "Updated at: 2021-09-03 16:00:00 IST"'

-

-# Install global dependencies

-RUN npm -g install gatsby-cli@4.24.0

-

-# clone gatsby-gitbook-starter

-RUN git clone https://github.com/hasura/gatsby-gitbook-starter.git

-RUN cd gatsby-gitbook-starter && git checkout gitbook-theme-hasura

-

-# Create app directory

-WORKDIR /gatsby-gitbook-starter

-

-RUN cd /gatsby-gitbook-starter

-

-# Install dependencies

-RUN npm ci

-

-# Remove already existing dummy content

-RUN rm -R content

-

-# Bundle app source

-COPY . .

-

-# serve on port 8080

-CMD ["gatsby", "develop", "--verbose", "-p", "8080", "--host", "0.0.0.0"]

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/README.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/README.md

deleted file mode 100644

index 628e64aaf..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/README.md

+++ /dev/null

@@ -1,18 +0,0 @@

-## Development

-

-#### Build Docker Image

-```bash

-docker build -t hasura-v3-ts-connector -f Dockerfile.localdev .

-```

-

-#### Run Container in Dev Mode

-

-```bash

-docker run -ti -p 8080:8080 -v /path/to/content:/gatsby-gitbook-starter/content -v /path/to/config.js:/gatsby-gitbook-starter/config.js hasura-v3-ts-connector

-```

-

-Two volumes are mounted. One for `content` and one for `config.js`. This is required for hot-reloading.

-

-Restart docker container

-- In case there are new files

-- Gatsby cache needs to be updated

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/config.js b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/config.js

deleted file mode 100644

index c994a7aec..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/config.js

+++ /dev/null

@@ -1,78 +0,0 @@

-const config = {

- gatsby: {

- pathPrefix: '/learn/graphql/hasura-v3-ts-connector',

- siteUrl: 'https://hasura.io',

- gaTrackingId: 'GTM-WBBW2LN',

- trailingSlash: true,

- },

- header: {

- logo: 'https://graphql-engine-cdn.hasura.io/img/hasura_icon_black.svg',

- logoLink: 'https://hasura.io/learn/',

- title:

- "learn hasura",

- githubUrl: 'https://github.com/hasura/learn-graphql',

- helpUrl: 'https://discord.com/invite/hasura',

- tweetText:

- 'Check out this course in building TypeScript data connectors for Hasura DDN by @HasuraHQ https://hasura.io/learn/graphql/react-apollo-components/introduction/',

- links: [

- {

- text: '',

- link: '',

- },

- ],

- search: {

- enabled: true,

- indexName: 'learn-hasura-backend',

- algoliaAppId: process.env.GATSBY_ALGOLIA_APP_ID,

- algoliaSearchKey: process.env.GATSBY_ALGOLIA_SEARCH_KEY,

- algoliaAdminKey: process.env.ALGOLIA_ADMIN_KEY,

- },

- },

- sidebar: {

- forcedNavOrder: ['/introduction/', '/get_started/', '/predicates/', '/ordering/', '/aggregates/', '/conclusion/'],

- links: [

- {

- text: 'Hasura Docs',

- link: 'https://hasura.io/docs/latest/graphql/core/index/',

- },

- {

- text: 'GraphQL API',

- link: 'https://hasura.io/graphql/',

- },

- ],

- },

- siteMetadata: {

- title: 'Build a data connector for Hasura DDN using TypeScript | Hasura',

- description:

- 'A concise tutorial that covers the fundamental concepts of building a data connector for Hasura DDN using TypeScript',

- ogImage: 'https://graphql-engine-cdn.hasura.io/learn-hasura/assets/social-media/twitter-card-hasura.png',

- docsLocation: 'https://github.com/hasura/learn-graphql/tree/master/tutorials/backend/hasura/tutorial-site/content',

- favicon: 'https://graphql-engine-cdn.hasura.io/learn-hasura/assets/homepage/hasura-favicon.png',

- },

- language: {

- code: 'en',

- name: 'English',

- translations: [

- {

- code: 'ja',

- name: 'Japanese',

- link: 'https://hasura.io/learn/ja/graphql/hasura/introduction/',

- },

- {

- code: 'es',

- name: 'Spanish',

- link: 'https://hasura.io/learn/es/graphql/hasura/introduction/',

- },

- {

- code: 'zh',

- name: 'Chinese',

- link: 'https://hasura.io/learn/zh/graphql/hasura/introduction/',

- },

- ],

- },

- newsletter: {

- ebookAvailable: true,

- },

-};

-

-module.exports = config;

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/aggregates.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/aggregates.md

deleted file mode 100644

index c9e31e055..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/aggregates.md

+++ /dev/null

@@ -1,16 +0,0 @@

----

-title: "Aggregates"

-metaTitle: 'Aggregates | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-This section covers the implementation of basic aggregate functions (`star_count` and `column_count`) in an SQLite

-connector for Hasura.

-

-It involves modifying the `fetch_aggregates` function to handle these aggregates, constructing a

-SQL query that applies the correct count functions, and ensuring that the query respects any specified conditions like

-predicates, sorting, or pagination.

-

-The `star_count` aggregate counts all entries, while `column_count` counts non-null values in a specific column,

-with the option to count distinct values. The section concludes with testing the connector to ensure correct SQL

-generation and functionality.

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/aggregates/1-video.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/aggregates/1-video.md

deleted file mode 100644

index 0813a092e..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/aggregates/1-video.md

+++ /dev/null

@@ -1,10 +0,0 @@

----

-title: "Video Walkthrough"

-metaTitle: 'Aggregates | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-import YoutubeEmbed from "../../src/YoutubeEmbed.js";

-

-

-

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/aggregates/2-fetch-aggregates.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/aggregates/2-fetch-aggregates.md

deleted file mode 100644

index fbbd52aa8..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/aggregates/2-fetch-aggregates.md

+++ /dev/null

@@ -1,219 +0,0 @@

----

-title: "Implementing Aggregates"

-metaTitle: 'Aggregates | Hasura DDN Typescript Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-Let's implement aggregates in our SQLite connector.

-

-Like we've done before, we won't implement aggregates in their full generality, and instead we're going to implement two

-types of aggregates, called `star_count` and `column_count`. Other aggregates like `SUM` and `MAX` that you know from

-Postgres will come under the umbrella of _custom aggregate functions_.

-

-If we take a look at our failing tests, we see that aggregate queries are indicated by the presence of the `aggregates`

-field in the query request body. Just like the `fields` property that we handled previously, each aggregate is named

-with a key, and has a `type`, in this case `star_count`. So we're going to handle aggregates very similarly to fields,

-by building up a SQL target list from these aggregates.

-

-```JSON

-{

- "collection": "albums",

- "query": {

- "aggregates": {

- "count": {

- "type": "star_count"

- }

- },

- "limit": 10

- },

- "arguments": {},

- "collection_relationships": {}

-}

-```

-

-[The NDC spec](https://hasura.github.io/ndc-spec/specification/queries/aggregates.html) says that each aggregate should

-act over the same set of rows that we consider when returning `rows`. That is, we should apply any predicates, sorting,

-pagination, and so on, and then apply the aggregate functions over the resulting set of rows.

-

-So assuming we have a function called `fetch_aggregates` which builds the SQL in this way, we can fill in the

-`aggregates` in the response.

-

-In the `query` function, add this line and amend the return value to include aggregates:

-

-```typescript

-const aggregates = request.query.aggregates && await fetch_aggregates(state, request);

-

-return [{ rows, aggregates }];

-```

-

-So the final query function becomes:

-```typescript

-async function query(configuration: Configuration, state: State, request: QueryRequest): Promise<QueryResponse> {

- console.log(JSON.stringify(request, null, 2));

-

- const rows = request.query.fields && await fetch_rows(state, request);

- const aggregates = request.query.aggregates && await fetch_aggregates(state, request);

-

- return [{ rows, aggregates }];

-}

-```

-

-Now let's start to fill in a `fetch_aggregates` helper function.

-

-We'll actually copy/paste the `fetch_rows` function and create a new function for handling aggregates. It would be

-possible to extract that commonality into a shared function, but arguably not worth it, since so much is already

-extracted out into small helper functions anyway.

-

-The first difference is the return type. Instead of `RowFieldValue`, we're going to return a value directly from the

-database, so let's change that to `unknown`.

-

-Next, we want to generate the target list using the requested aggregates, so let's change that.

-

-```typescript

-async function fetch_aggregates(state: State, request: QueryRequest): Promise<{ [k: string]: unknown }> {

- const target_list = [];

-

- for (const aggregateName in request.query.aggregates) {

- if (Object.prototype.hasOwnProperty.call(request.query.aggregates, aggregateName)) {

- const aggregate = request.query.aggregates[aggregateName];

- switch(aggregate.type) {

- case 'star_count':

-

- case 'column_count':

-

- case 'single_column':

- }

- }

- }

-

-}

-```

-

-For now, we'll handle the first two cases here.

-

-In the first case, we want to generate a target list element which uses the `COUNT` SQL aggregate function.

-

-```typescript

-// ...

-case 'star_count':

- target_list.push(`COUNT(1) AS ${aggregateName}`);

- break;

-// ...

-```

-

-In the second case, we'll also use the `COUNT` function, but this time, we're counting non-null values in a single column:

-

-```typescript

-// ...

-case 'column_count':

- target_list.push(`COUNT(${aggregate.column}) AS ${aggregateName}`);

- break;

-// ...

-```

-

-We also need to interpret the `distinct` property of the aggregate object, and insert the `DISTINCT` keyword if needed:

-

-```typescript

-// ...

-case 'column_count':

- target_list.push(`COUNT(${aggregate.distinct ? 'DISTINCT ' : ''}${aggregate.column}) AS ${aggregateName}`);

- break;

-// ...

-```

-

-We'll build a new SQL string within `fetch_aggregates()` that uses the generated target list:

-

-```typescript

-// ...

-const sql = `SELECT ${target_list.join(", ")} FROM (

-

- SELECT * FROM ${request.collection} ${where_clause} ${order_by_clause} ${limit_clause} ${offset_clause}

- )`;

-// ...

-```

-

-Note that we form the set of rows to be aggregated first, so that the limit and offset clauses are applied correctly.

-

-And instead of returning all rows, we're going to assume that we only get a single row back, so we can match on that and

-return the single row of aggregates:

-

-```typescript

-const result = await state.db.get(sql, ...parameters);

-

-if (result === undefined) {

- throw new InternalServerError("Unable to fetch aggregates");

-}

-

-return result;

-```

-

-Here's the full function:

-

-```typescript

-async function fetch_aggregates(state: State, request: QueryRequest): Promise<{

- [k: string]: unknown

-}> {

- const target_list = [];

-

- for (const aggregateName in request.query.aggregates) {

- if (Object.prototype.hasOwnProperty.call(request.query.aggregates, aggregateName)) {

- const aggregate = request.query.aggregates[aggregateName];

- switch(aggregate.type) {

- case 'star_count':

- target_list.push(`COUNT(1) AS ${aggregateName}`);

- break;

- case 'column_count':

- target_list.push(`COUNT(${aggregate.distinct ? 'DISTINCT ' : ''}${aggregate.column}) AS ${aggregateName}`);

- break;

- case 'single_column':

- throw new NotSupported("custom aggregates not yet supported");

- }

- }

- }

-

- const parameters: any[] = [];

-

- const limit_clause = request.query.limit == null ? "" : `LIMIT ${request.query.limit}`;

-

- const offset_clause = request.query.offset == null ? "" : `OFFSET ${request.query.offset}`;

-

- const where_clause = request.query.where == null ? "" : `WHERE ${visit_expression(parameters, request.query.where)}`;

-

- const order_by_clause = request.query.order_by == null ? "" : `ORDER BY ${visit_order_by_elements(request.query.order_by.elements)}`;

-

- const sql = `SELECT ${target_list.join(", ")} FROM (

- SELECT * FROM ${request.collection} ${where_clause} ${order_by_clause} ${limit_clause} ${offset_clause}

- )`;

-

- console.log(JSON.stringify({ sql, parameters }, null, 2));

-

- const result = await state.db.get(sql, ...parameters);

-

- if (result === undefined) {

- throw new InternalServerError("Unable to fetch aggregates");

- }

-

- return result;

-}

-```

-

-That's it, so let's test our connector one more time, and hopefully see some passing tests this time.

-

-Remember to delete the snapshots first, so that we can generate new ones:

-

-```bash

-rm -rf snapshots

-```

-

-And re-run the tests with the snapshots directory:

-

-```shell

-ndc-test test --endpoint http://0.0.0.0:8100 --snapshots-dir snapshots

-```

-

-OR

-```shell

-cargo run --bin ndc-test -- test --endpoint http://localhost:8100 --snapshots-dir snapshots

-```

-

-Nice! We've now implemented the `star_count` and `column_count` aggregates, and we've seen how to generate SQL for them.

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/conclusion.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/conclusion.md

deleted file mode 100644

index 1b39a5d86..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/conclusion.md

+++ /dev/null

@@ -1,17 +0,0 @@

----

-title: "Conclusion"

-metaTitle: 'Conclusion | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector for Hasura DDN'

----

-

-Well done for finishing the tutorial! We hope you enjoyed it and learned about the basics of building a simple data

-connector for Hasura DDN.

-

-Obviously, there is a lot more to building a data connector than what we covered in this tutorial. We encourage you to

-explore the [Hasura DDN connector spec](https://hasura.github.io/ndc-spec/specification/) to learn more about the

-capabilities of the Hasura DDN connector API.

-

-And remember to check out the [Hasura DDN connectors hub](https://hasura.io/connectors#connectors-list) for a growing

-list of other connectors built by both Hasura and the community.

-

-There will be more courses coming soon, so stay tuned!

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started.md

deleted file mode 100644

index c4483119a..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started.md

+++ /dev/null

@@ -1,19 +0,0 @@

----

-title: "Get Started"

-metaTitle: 'Get Started | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-This video series focuses on building a native data connector for Hasura in TypeScript, which enables the integration of

-various data sources into your Hasura Supergraph.

-

-This initial section goes through the basic setup and scaffolding of a connector using the Hasura TypeScript

-connector SDK and a local SQLite database.

-

-It covers the creation of types for configurations and state, and the implementation of essential functions

-following the SDK guidelines.

-

-We also introduce a test suite and show the integration of the connector with Hasura,

-demonstrating the process through a practical example.

-

-Let's get started...

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/01-video.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/01-video.md

deleted file mode 100644

index 143a90645..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/01-video.md

+++ /dev/null

@@ -1,10 +0,0 @@

----

-title: "Video Walkthrough"

-metaTitle: 'Get Started | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-import YoutubeEmbed from "../../src/YoutubeEmbed.js";

-

-

-

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/02-clone.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/02-clone.md

deleted file mode 100644

index 8ba8dd9ee..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/02-clone.md

+++ /dev/null

@@ -1,43 +0,0 @@

----

-title: "Clone the Repo"

-metaTitle: 'Clone the Repo | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-You can use this course by watching the videos and reading, but you can also

-[clone the finished repo](https://github.com/hasura/ndc-typescript-learn-course) to see the finished result in

-action straight away. Or, to follow along starting from a skeleton project, clone the repo and check out the

-`follow-along` branch:

-

-```shell

-# If using GitHub with SSH

-git clone git@github.com:hasura/ndc-typescript-learn-course.git

-# OR if using GitHub with HTTPS

-git clone https://github.com/hasura/ndc-typescript-learn-course.git

-```

-

-To check out the `follow-along` branch:

-```shell

-git checkout follow-along

-```

-

-Then install the dependencies:

-```shell

-npm install

-```

-

-You can build and run the connector, when you need to, with:

-```shell

-npm run build && node dist/index.js serve --configuration configuration.json

-```

-

-Alternatively, you can run nodemon to watch for changes and rebuild automatically:

-

-```shell

-npm run watch

-```

-

-[//]: # (TODO: Cannot find more information about the configuration file creation and usage.)

-

-_Note: the configuration.json file is a pre-configured file which gives the connector information about the data

-source._

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/03-basics.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/03-basics.md

deleted file mode 100644

index 9b829ce71..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/03-basics.md

+++ /dev/null

@@ -1,45 +0,0 @@

----

-title: "Basic Setup"

-metaTitle: 'Basic Setup | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-Let's set up the scaffolding for our connector, and we'll see the first queries start to work. We'll also start to

-develop a test suite, and see our connector running in Hasura.

-

-For now, we'll just handle the most basic queries, but later, we'll start to fill in some of the gaps in our

-implementation, and see more queries return results correctly.

-

-[//]: # (TODO: This: "We'll also cover topics such as metrics, connector configuration, error reporting, and tracing.

-" is not implemented - possibly Phil Freeman will do this in the future?)

-

-The data source you'll be targeting is a SQLite database running on your local machine, and we'll be using the Hasura

-[TypeScript connector SDK](https://github.com/hasura/ndc-sdk-typescript).

-

-If you've cloned the repo in the previous step, you can follow along with the code in this tutorial.

-

-Let's start by following the [SDK guidelines](https://github.com/hasura/ndc-sdk-typescript) and use the `start`

-function, which takes a `connector` of type `Connector`.

-

-## Start

-

-In your `src/index.ts` file, add the following:

-

-```typescript

-const connector: Connector<RawConfiguration, Configuration, State> = {};

-

-start(connector);

-```

-

-We will also need some imports over the course of the tutorial. Paste these at the top of your index.ts file:

-

-```typescript

-import sqlite3 from 'sqlite3';

-import { Database, open } from 'sqlite';

-import { BadRequest, CapabilitiesResponse, CollectionInfo, ComparisonValue, Connector, ExplainResponse, InternalServerError, MutationRequest, MutationResponse, NotSupported, ObjectField, ObjectType, OrderByElement, QueryRequest, QueryResponse, RowFieldValue, ScalarType, SchemaResponse, start } from "@hasura/ndc-sdk-typescript";

-import { JSONSchemaObject } from "@json-schema-tools/meta-schema";

-import { ComparisonTarget, Expression } from '@hasura/ndc-sdk-typescript/dist/generated/typescript/QueryRequest';

-```

-

-You'll notice that your IDE will complain about the `connector` object not having the correct type, and

-`RawConfiguration, Configuration, State` all being undefined. Let's fix that in the next section...

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/04-configuration-and-state.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/04-configuration-and-state.md

deleted file mode 100644

index 2e0298bec..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/04-configuration-and-state.md

+++ /dev/null

@@ -1,52 +0,0 @@

----

-title: "Configuration and State"

-metaTitle: 'Configuration and State | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-We need to fill in implementations for each of the required functions, but we won't need all of these to work just yet.

-

-First, you'll see that we define three types: `RawConfiguration`, `Configuration`, and `State`.

-

-Let's define those now, above the `connector` definition and the `start` call:

-

-```typescript

-type RawConfiguration = {

- tables: TableConfiguration[];

-};

-

-type TableConfiguration = {

- tableName: string;

- columns: { [k: string]: Column };

-};

-

-type Column = {};

-

-type Configuration = RawConfiguration;

-

-type State = {

- db: Database;

-};

-```

-

-`RawConfiguration` is the type of configuration that the user will see. By convention, this configuration should be

-enough to reproducibly determine the connector's schema, so for our SQLite connector, we configure the connector

-with an array of tables that we want to expose. Each of these table types in `TableConfiguration` is defined by its

-name and a list of columns.

-

-`Column`s don't have any specific configuration yet, but we leave an empty object type here because we might want to

-capture things like column types later on.

-

-The `Configuration` type is supposed to be a validated version of the raw configuration, but for our purposes, we'll

-reuse the same type.

-

-[//]: # (TODO: What does it mean to validate the configuration? What does it mean to have a validated configuration?)

-

-## State

-

-The `State` type is for things like connection pools, handles, or any non-serializable state that gets allocated on

-startup, and which lives for the lifetime of the connector. For our connector, we need to keep a handle to our SQLite

-database.

-

-Cool, so now that we've got our types defined, we can fill in the function definitions which the connector requires

-in order to interact with our SQLite database and Hasura DDN. Let's do that in the next step.

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/05-function-definitions.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/05-function-definitions.md

deleted file mode 100644

index 4ab53897b..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/05-function-definitions.md

+++ /dev/null

@@ -1,88 +0,0 @@

----

-title: "Function Definitions"

-metaTitle: 'Function Definitions | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-Now let's fill in some function definitions. These are the functions the connector must provide to satisfy

-the Hasura connector specification, and we'll be implementing them as we go through the course.

-

-Copy and paste the following required functions into the `src/index.ts` file. Note that the amended `connector` is

-also included at the bottom; it replaces the previous connector definition and takes these functions as

-properties.

-

-```typescript

-function get_raw_configuration_schema(): JSONSchemaObject {

- throw new Error("Function not implemented.");

-}

-

-function get_configuration_schema(): JSONSchemaObject {

- throw new Error("Function not implemented.");

-}

-

-function make_empty_configuration(): RawConfiguration {

- throw new Error("Function not implemented.");

-}

-

-async function update_configuration(configuration: RawConfiguration): Promise<RawConfiguration> {

- throw new Error("Function not implemented.");

-}

-

-async function fetch_metrics(configuration: RawConfiguration, state: State): Promise<undefined> {

- throw new Error("Function not implemented.");

-}

-

-async function health_check(configuration: RawConfiguration, state: State): Promise<undefined> {

- throw new Error("Function not implemented.");

-}

-

-async function explain(configuration: RawConfiguration, state: State, request: QueryRequest): Promise<ExplainResponse> {

- throw new Error("Function not implemented.");

-}

-

-async function mutation(configuration: RawConfiguration, state: State, request: MutationRequest): Promise<MutationResponse> {

- throw new Error("Function not implemented.");

-}

-

-// Implement these 5 functions below for this course

-

-async function validate_raw_configuration(configuration: RawConfiguration): Promise<RawConfiguration> {

- throw new Error("Function not implemented.");

-}

-

-async function try_init_state(configuration: RawConfiguration, metrics: unknown): Promise<State> {

- throw new Error("Function not implemented.");

-}

-

-function get_capabilities(configuration: RawConfiguration): CapabilitiesResponse {

- throw new Error("Function not implemented.");

-}

-

-async function get_schema(configuration: RawConfiguration): Promise<SchemaResponse> {

- throw new Error("Function not implemented.");

-}

-

-async function query(configuration: RawConfiguration, state: State, request: QueryRequest): Promise<QueryResponse> {

- throw new Error("Function not implemented.");

-}

-

-const connector: Connector<RawConfiguration, Configuration, State> = {

- get_raw_configuration_schema,

- get_configuration_schema,

- make_empty_configuration,

- update_configuration,

- validate_raw_configuration,

- try_init_state,

- fetch_metrics,

- health_check,

- get_capabilities,

- get_schema,

- explain,

- mutation,

- query

-};

-```

-

-OK, moving on swiftly: for this course we only need to implement the last five functions,

-`validate_raw_configuration`, `try_init_state`, `get_capabilities`, `get_schema`, and `query`, in order to get a

-basic working connector. Let's do that now.

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/06-implementation.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/06-implementation.md

deleted file mode 100644

index a650bc36a..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/06-implementation.md

+++ /dev/null

@@ -1,52 +0,0 @@

----

-title: "Implementation"

-metaTitle: 'Implementation | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-Right now, we only need to implement five required functions:

-- `validate_raw_configuration` - which validates the configuration provided by the user.

-- `try_init_state` - which initializes our database connection.

-- `get_capabilities` - which returns the capabilities of our connector as per the spec.

-- `get_schema` - which returns a spec-compatible schema containing our tables and columns.

-- `query` - which actually responds to query requests.

-

-We'll skip configuration validation entirely for now, so in the `validate_raw_configuration` function which you

-pasted in the previous step, we'll just return the configuration. Edit it as follows:

-

-[//]: # (TODO: Need to understand what this is)

-

-```typescript

-async function validate_raw_configuration(configuration: RawConfiguration): Promise<RawConfiguration> {

- return configuration;

-}

-```

-

-To initialize our state, which in our case contains a connection to the database, we'll use the `open` function to

-open a connection to it, and store the resulting connection object in our state by returning it:

-

-```typescript

-async function try_init_state(configuration: RawConfiguration, metrics: unknown): Promise<State> {

- const db = await open({

- filename: 'database.db',

- driver: sqlite3.Database

- });

-

- return { db };

-}

-```

-

-[//]: # (TODO: Link to the relevant part of the spec)

-Our capabilities response will be very simple, because we won't support many capabilities yet. We just return the

-version range of the specification that we are compatible with, and the basic `query` capability.

-

-```typescript

-function get_capabilities(configuration: RawConfiguration): CapabilitiesResponse {

- return {

- versions: "^0.1.0",

- capabilities: {

- query: {}

- }

- }

-}

-```

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/07-implement-get-schema-and-query.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/07-implement-get-schema-and-query.md

deleted file mode 100644

index f165fe387..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/07-implement-get-schema-and-query.md

+++ /dev/null

@@ -1,207 +0,0 @@

----

-title: "The Get Schema Function"

-metaTitle: 'The Get Schema Function | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-`get_schema` is the first interesting function. It needs to return a spec-compatible schema containing our tables and

-columns.

-

-In it, we're going to define scalar types, and an object type and a collection for each table in the configuration.

-For this course, we're going to ignore the `functions` and `procedures` fields, but we'll cover those in later

-courses.

-

-It takes the `RawConfiguration` as an argument and returns an object in the shape of `SchemaResponse`,

-e.g.:

-

-```typescript

-export interface SchemaResponse {

- /**

- * Collections which are available for queries and/or mutations

- */

- collections: CollectionInfo[];

- /**

- * Functions (i.e. collections which return a single column and row)

- */

- functions: FunctionInfo[];

- /**

- * A list of object types which can be used as the types of arguments, or return types of procedures. Names should not overlap with scalar type names.

- */

- object_types: {

- [k: string]: ObjectType;

- };

- /**

- * Procedures which are available for execution as part of mutations

- */

- procedures: ProcedureInfo[];

- /**

- * A list of scalar types which will be used as the types of collection columns

- */

- scalar_types: {

- [k: string]: ScalarType;

- };

-}

-```

-

-Let's first define the scalar types. In fact, we're only going to define one, called `any` as a string literal:

-

-```typescript

-async function get_schema(configuration: RawConfiguration): Promise<SchemaResponse> {

- let scalar_types: { [k: string]: ScalarType } = {

- 'any': {

- aggregate_functions: {},

- comparison_operators: {},

- update_operators: {},

- }

- };

-}

-```

-[//]: # (TODO: This is confusing name because of the any type in typescript)

-`any` is a generic scalar type that we'll use as the type of all of our columns. It doesn't have any comparison

-operators or aggregates defined. Later, when we talk about those features, we'll need to split this type up into several

-different scalar types.

-

-Now let's define the object types.

-

-```typescript {5}

-async function get_schema(configuration: RawConfiguration): Promise<SchemaResponse> {

- let scalar_types: { [k: string]: ScalarType } = {

- 'any': {

- aggregate_functions: {},

- comparison_operators: {},

- update_operators: {},

- }

- };

-

- let object_types: { [k: string]: ObjectType } = {};

-

- for (const table of configuration.tables) {

- let fields: { [k: string]: ObjectField } = {};

-

- for (const columnName in table.columns) {

- fields[columnName] = {

- type: {

- type: 'named',

- name: 'any'

- }

- };

- }

-

- object_types[table.tableName] = {

- fields

- };

- }

-}

-```

-

-Here we create one `ObjectType` definition for each table in the configuration.

-

-Notice that the name of the object type will be the name of the table, and each column uses the `any` type that we just

-defined.

-

-## Collections

-

-Now let's define the collections:

-

-```typescript

-async function get_schema(configuration: RawConfiguration): Promise<SchemaResponse> {

- let scalar_types: { [k: string]: ScalarType } = {

- 'any': {

- aggregate_functions: {},

- comparison_operators: {},

- update_operators: {},

- }

- };

-

- let object_types: { [k: string]: ObjectType } = {};

-

- for (const table of configuration.tables) {

- let fields: { [k: string]: ObjectField } = {};

-

- for (const columnName in table.columns) {

- fields[columnName] = {

- type: {

- type: 'named',

- name: 'any'

- }

- };

- }

-

- object_types[table.tableName] = {

- fields

- };

- }

-

- let collections: CollectionInfo[] = configuration.tables.map((table) => {

- return {

- arguments: {},

- name: table.tableName,

- deletable: false,

- foreign_keys: {},

- uniqueness_constraints: {},

- type: table.tableName,

- };

- });

-}

-```

-

-Again, we define one collection per table in the configuration, and we use the object type with the same name that we

-just defined.

-

-Now we can put the schema response together:

-

-```typescript

-async function get_schema(configuration: RawConfiguration): Promise<SchemaResponse> {

- let scalar_types: { [k: string]: ScalarType } = {

- 'any': {

- aggregate_functions: {},

- comparison_operators: {},

- update_operators: {},

- }

- };

-

- let object_types: { [k: string]: ObjectType } = {};

-

- for (const table of configuration.tables) {

- let fields: { [k: string]: ObjectField } = {};

-

- for (const columnName in table.columns) {

- fields[columnName] = {

- type: {

- type: 'named',

- name: 'any'

- }

- };

- }

-

- object_types[table.tableName] = {

- fields

- };

- }

-

- let collections: CollectionInfo[] = configuration.tables.map((table) => {

- return {

- arguments: {},

- name: table.tableName,

- deletable: false,

- foreign_keys: {},

- uniqueness_constraints: {},

- type: table.tableName,

- };

- });

-

- return {

- functions: [],

- procedures: [],

- collections,

- object_types,

- scalar_types,

- };

-}

-```

-

-As mentioned before, we aren't defining `functions` or `procedures`, but we'll cover those features later in the

-series.
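The mapping from configuration to object types can be tried in isolation. The following is a minimal sketch, not the SDK's own code: the `TableConfiguration` shape, the local stand-in types, the helper name `buildObjectTypes`, and the sample `artists` columns are all assumptions made for illustration.

```typescript
// Hypothetical configuration shape, assumed for illustration only.
type TableConfiguration = {
  tableName: string;
  columns: { [columnName: string]: object };
};
type RawConfiguration = { tables: TableConfiguration[] };

// Minimal local stand-ins for the SDK's ObjectField and ObjectType.
type ObjectField = { type: { type: "named"; name: string } };
type ObjectType = { fields: { [k: string]: ObjectField } };

// One object type per table; every column uses the generic `any` scalar.
function buildObjectTypes(configuration: RawConfiguration): { [k: string]: ObjectType } {
  const objectTypes: { [k: string]: ObjectType } = {};
  for (const table of configuration.tables) {
    const fields: { [k: string]: ObjectField } = {};
    for (const columnName of Object.keys(table.columns)) {
      fields[columnName] = { type: { type: "named", name: "any" } };
    }
    objectTypes[table.tableName] = { fields };
  }
  return objectTypes;
}

// Sample configuration; the column lists are assumed, not taken from the course repo.
const sampleConfiguration: RawConfiguration = {
  tables: [
    { tableName: "albums", columns: { id: {}, title: {}, artist_id: {} } },
    { tableName: "artists", columns: { id: {}, name: {} } },
  ],
};

const objectTypes = buildObjectTypes(sampleConfiguration);
console.log(JSON.stringify(objectTypes, null, 2));
```

Running this prints one entry per table, keyed by table name, which is the same key the collections use in their `type` field.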

-

-We now have an almost-working connector; the only missing piece is actually querying the database. Let's set up some

-testing so we know what we're working towards and when we're done.

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/08-testing.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/08-testing.md

deleted file mode 100644

index c424da060..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/08-testing.md

+++ /dev/null

@@ -1,75 +0,0 @@

----

-title: "Testing"

-metaTitle: 'Testing | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-So we have one more function to define, which is the query function, but before we do, let's talk about tests. The [NDC

-specification repository](https://github.com/hasura/ndc-spec/) provides a

-[test runner executable](https://github.com/hasura/ndc-spec/tree/main/ndc-test) called `ndc-test`, which can be used to

-implement a test suite for a connector.

-

-We can also use `ndc-test` to run some automatic tests and validate the work we've done so far. Let's compile and run

-our connector, and then use the test runner with the running connector.

-

-Back in your `ndc-typescript-learn-course` directory that we cloned during setup, you have a `configuration.json` file

-which you can use to run the connector against your sample database.

-

-[//]: # (TODO more info about configuration.json)

-

-[//]: # (In this context of this course you need not worry about the configuration file or how it was created, although it is )

-[//]: # (a core feature of Hasura DDN. You can read more about it in the )

-[//]: # ([Hasura DDN quickstart](https://hasura.io/docs/3.0/local-dev/) and in the )

-[//]: # ([supergraph modeling](https://hasura.io/docs/3.0/supergraph-modeling/overview/) section of docs. )

-

-[//]: # (TODO - document the test runner better in the spec repo)

-Now, let's run the tests. (You will need to have the

-[ndc test runner](https://github.com/hasura/ndc-spec/tree/main/ndc-test) installed on

-your machine.)

-

-```shell

-ndc-test test --endpoint http://localhost:8100

-```

-

-OR

-

-```shell

-cargo run --bin ndc-test -- test --endpoint http://localhost:8100

-```

-

-Some tests fail, as expected, but we can already see that our schema response is good.

-

-```text

-cargo run --bin ndc-test -- test --endpoint http://localhost:8100

- Finished dev [unoptimized + debuginfo] target(s) in 0.21s

- Running `/Users/me/ndc-spec/target/debug/ndc-test test --endpoint 'http://localhost:8100'`

-├ Capabilities ...

-│ ├ Fetching /capabilities ... OK

-│ ├ Validating capabilities ... OK

-├ Schema ...

-│ ├ Fetching schema ... OK

-│ ├ Validating schema ...

-│ │ ├ object_types ... OK

-│ │ ├ Collections ...

-│ │ │ ├ albums ...

-│ │ │ │ ├ Arguments ... OK

-│ │ │ │ ├ Collection type ... OK

-│ │ │ ├ artists ...

-│ │ │ │ ├ Arguments ... OK

-│ │ │ │ ├ Collection type ... OK

-│ │ ├ Functions ...

-│ │ │ ├ Procedures ...

-├ Query ...

-│ ├ albums ...

-│ │ ├ Simple queries ...

-│ │ │ ├ Select top N ... FAIL

-│ │ ├ Aggregate queries ...

-│ │ │ ├ star_count ... FAIL

-│ ├ artists ...

-│ │ ├ Simple queries ...

-│ │ │ ├ Select top N ... FAIL

-│ │ ├ Aggregate queries ...

-│ │ │ ├ star_count ... FAIL

-```

-

-In the next section, we'll start to implement the query function, and see some of these tests pass.

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/09-query-function.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/09-query-function.md

deleted file mode 100644

index 0f9ccd5b9..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/09-query-function.md

+++ /dev/null

@@ -1,202 +0,0 @@

----

-title: "The Query Function"

-metaTitle: 'The Query Function | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-Let's modify our query function to log out the request it receives, and this will give us a goal to work towards.

-

-```typescript

-async function query(configuration: RawConfiguration, state: State, request: QueryRequest): Promise<QueryResponse> {

- console.log(JSON.stringify(request, null, 2));

- throw new Error("Function not implemented.");

-}

-```

-

-Let's run the tests again.

-

-```shell

-rm -rf snapshots

-```

-

-```shell

-ndc-test test --endpoint http://0.0.0.0:8100 --snapshots-dir snapshots

-```

-

-OR

-```shell

-cargo run --bin ndc-test -- test --endpoint http://localhost:8100 --snapshots-dir snapshots

-```

-

-In the logs of the app, we can see the request

-that was sent. It identifies the name of the collection, and a query object to run. The query has a list of fields

-to retrieve, and a limit of 10 rows. With this as a guide, we can start to implement our query function in the next

-section.

-

-```text

-...

-{

- "collection": "albums",

- "query": {

- "fields": {

- "artist_id": {

- "type": "column",

- "column": "artist_id"

- },

- "id": {

- "type": "column",

- "column": "id"

- },

- "title": {

- "type": "column",

- "column": "title"

- }

- },

- "limit": 10

- },

- "arguments": {},

- "collection_relationships": {}

-}

-...

-```

-

-The query function is going to delegate to a function called `fetch_rows`, but only when rows are requested, which is

-indicated by the presence of the query fields property. Let's do that:

-

-```typescript

-async function query(configuration: RawConfiguration, state: State, request: QueryRequest): Promise<QueryResponse> {

- console.log(JSON.stringify(request, null, 2));

- const rows = request.query.fields && await fetch_rows(state, request);

- return [{ rows }];

-}

-```

-

-Later, we'll also implement aggregates here.

-

-Let's define the `fetch_rows` function the `query` function is delegating to:

-

-```typescript

-async function fetch_rows(state: State, request: QueryRequest): Promise<{ [k: string]: RowFieldValue }[]> {

- const fields = [];

-

- for (const fieldName in request.query.fields) {

- if (Object.prototype.hasOwnProperty.call(request.query.fields, fieldName)) {

- const field = request.query.fields[fieldName];

- switch (field.type) {

- case 'column':

- fields.push(`${field.column} AS ${fieldName}`);

- break;

- case 'relationship':

- throw new Error("Relationships are not supported");

- }

-

- }

- }

-

- if (request.query.order_by != null) {

- throw new NotSupported("Sorting is not supported");

- }

-

- const limit_clause = request.query.limit == null ? "" : `LIMIT ${request.query.limit}`;

-

- const offset_clause = request.query.offset == null ? "" : `OFFSET ${request.query.offset}`;

-

- const sql = `SELECT ${fields.join(", ")} FROM ${request.collection} ${limit_clause} ${offset_clause}`;

-

- console.log(JSON.stringify({ sql }, null, 2));

-

- return state.db.all(sql);

-}

-```

-This function breaks down the request that we saw earlier and produces SQL with a basic shape. Here is what `fetch_rows`

-does:

-

-1. It initializes an empty array `fields` to store the fields that will be selected in the SQL query.

-

-2. It iterates over the `fields` property of the `request.query` object. Each field represents a column in the database

-table that needs to be fetched.

-

-3. For each field, it checks the `type` of the field. If the type is 'column', it adds the column, aliased to the

-requested field name, to the `fields` array. If the type is 'relationship', it throws an error because relationships are not supported yet.

-

-4. It checks if the `request.query.order_by` is not null. If it is not null, it throws an error because sorting is

-not supported.

-

-5. It generates the `LIMIT` and `OFFSET` clauses for the SQL query based on the `request.query.limit` and

-`request.query.offset` values.

-

-6. It constructs the SQL query string using the `fields` array, the collection name (which corresponds to the table

-name in the database), and the `LIMIT` and `OFFSET` clauses.

-

-7. It logs the constructed SQL query.

-

-8. Finally, it executes the SQL query on the database and returns the result. The database connection is accessed

-through the `db` object in the state.

-

-Notice that we don't fetch more data than we need, either in terms of rows or columns. That's the benefit of

-connectors - we get to push down the query execution to the data sources themselves.
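To see the SQL these steps produce without a database, the string-building part can be pulled out into a pure function. This is a sketch under assumptions: the request types are simplified local stand-ins modeled on the logged request, and `buildSelect` is a hypothetical helper name. It also deviates from `fetch_rows` in one place: SQLite only accepts `OFFSET` after a `LIMIT`, so the sketch emits `LIMIT -1` (no upper bound) when only an offset is given.

```typescript
// Simplified stand-ins for the SDK request types (assumed shapes).
type Field = { type: "column"; column: string } | { type: "relationship" };
type Query = {
  fields?: { [k: string]: Field } | null;
  limit?: number | null;
  offset?: number | null;
};
type QueryRequest = { collection: string; query: Query };

// Hypothetical helper: builds the SELECT statement only, it does not run it.
function buildSelect(request: QueryRequest): string {
  const requested: { [k: string]: Field } = request.query.fields ?? {};
  const fields: string[] = [];

  for (const fieldName of Object.keys(requested)) {
    const field = requested[fieldName];
    if (field === undefined || field.type !== "column") {
      throw new Error(`Unsupported field: ${fieldName}`);
    }
    // Select the column under the alias the caller asked for.
    fields.push(`${field.column} AS ${fieldName}`);
  }

  const clauses = [`SELECT ${fields.join(", ")} FROM ${request.collection}`];
  const limit = request.query.limit;
  const offset = request.query.offset;
  if (limit != null) {
    clauses.push(`LIMIT ${limit}`);
  } else if (offset != null) {
    clauses.push("LIMIT -1"); // SQLite needs a LIMIT before OFFSET; -1 means unbounded
  }
  if (offset != null) {
    clauses.push(`OFFSET ${offset}`);
  }
  return clauses.join(" ");
}

const sql = buildSelect({
  collection: "albums",
  query: {
    fields: {
      artist_id: { type: "column", column: "artist_id" },
      id: { type: "column", column: "id" },
      title: { type: "column", column: "title" },
    },
    limit: 10,
  },
});
console.log(sql);
// SELECT artist_id AS artist_id, id AS id, title AS title FROM albums LIMIT 10
```

Note that column and collection names are interpolated directly into the SQL here, as in the tutorial code; a production connector would want to validate or quote identifiers.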

-

-

-## Test again

-

-Now let's see it work in the test runner. We'll rebuild and restart the connector, and run the tests again.

-

-```text

-cargo run --bin ndc-test -- test --endpoint http://localhost:8100

- Finished dev [unoptimized + debuginfo] target(s) in 0.29s

- Running `/Users/me/ndc-spec/target/debug/ndc-test test --endpoint 'http://localhost:8100'`

-├ Capabilities ...

-│ ├ Fetching /capabilities ... OK

-│ ├ Validating capabilities ... OK

-├ Schema ...

-│ ├ Fetching schema ... OK

-│ ├ Validating schema ...

-│ │ ├ object_types ... OK

-│ │ ├ Collections ...

-│ │ │ ├ albums ...

-│ │ │ │ ├ Arguments ... OK

-│ │ │ │ ├ Collection type ... OK

-│ │ │ ├ artists ...

-│ │ │ │ ├ Arguments ... OK

-│ │ │ │ ├ Collection type ... OK

-│ │ ├ Functions ...

-│ │ │ ├ Procedures ...

-├ Query ...

-│ ├ albums ...

-│ │ ├ Simple queries ...

-│ │ │ ├ Select top N ... OK

-│ │ │ ├ Predicates ... OK

-│ │ │ ├ Sorting ... FAIL

-│ │ ├ Aggregate queries ...

-│ │ │ ├ star_count ... FAIL

-│ ├ artists ...

-│ │ ├ Simple queries ...

-│ │ │ ├ Select top N ... OK

-│ │ │ ├ Predicates ... OK

-│ │ │ ├ Sorting ... FAIL

-│ │ ├ Aggregate queries ...

-│ │ │ ├ star_count ... FAIL

-```

-

-[//]: # (TODO - why are predicates passing here? They have not been implemented)

-

-Of course, we still see some tests fail, but now we've made some progress because the most basic tests are passing.

-

-We can get the test runner to write these expectations out as snapshot files to disk by adding the `--snapshots-dir`

-argument.

-

-```shell

-ndc-test test --endpoint http://0.0.0.0:8100 --snapshots-dir snapshots

-```

-

-OR

-```shell

-cargo run --bin ndc-test -- test --endpoint http://localhost:8100 --snapshots-dir snapshots

-```

-

-Here we can build up a library of **query requests** and **expected responses** that can be replayed in order to make

-sure that our connector continues to exhibit the same behavior over time.

-

-Finally, let's see what this connector looks like when we add it to our Hasura graph. We'll check that out in the

-next section.

-

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/10-cloud-integration.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/10-cloud-integration.md

deleted file mode 100644

index 2b4c9daa7..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/get_started/10-cloud-integration.md

+++ /dev/null

@@ -1,77 +0,0 @@

----

-title: "Cloud Integration"

-metaTitle: 'Cloud Integration | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-Walking through the creation and deployment of a project on Hasura DDN is beyond the scope of this course, but it

-is covered in the Hasura Docs, which you can

-[check out here](https://hasura.io/docs/3.0/local-dev/).

-

-We have created and included a Hasura DDN metadata configuration in the

-[repo for this course](https://github.com/hasura/ndc-typescript-learn-course/blob/main/hasura/) which you can use to

-test your API, query it and see album and artist results - all powered by your connector.

-

-## Install Hasura CLI and start a tunnel

-

-To run this connector you will need to [install the Hasura3 CLI](https://hasura.io/docs/3.0/cli/installation/) and

-create a tunnel so that Hasura DDN can reach your local machine. You can do this by first starting the tunnel daemon,

-with `hasura3 daemon start` and then creating a tunnel with `hasura3 tunnel create localhost:8100`.

-

-The command will return a hostname and port which you should enter into the

-`/hasura/subgraphs/default/dataconnectors/db.hml` file in place of where you see the value for the connector URL. Eg:

-

-```yaml

-definition:

- name: my_sqlite_connector

- url:

- singleUrl:

- value: https://tunnel-url-here

-```

-

-## Create a project on Hasura DDN

-

-In the console run `hasura3 project create` to create a project on Hasura DDN and paste that project name in

-the `hasura/hasura.yaml` file in the `project` field. Eg:

-

-```yaml

-project: my-project-name

-```

-

-## Create a build

-

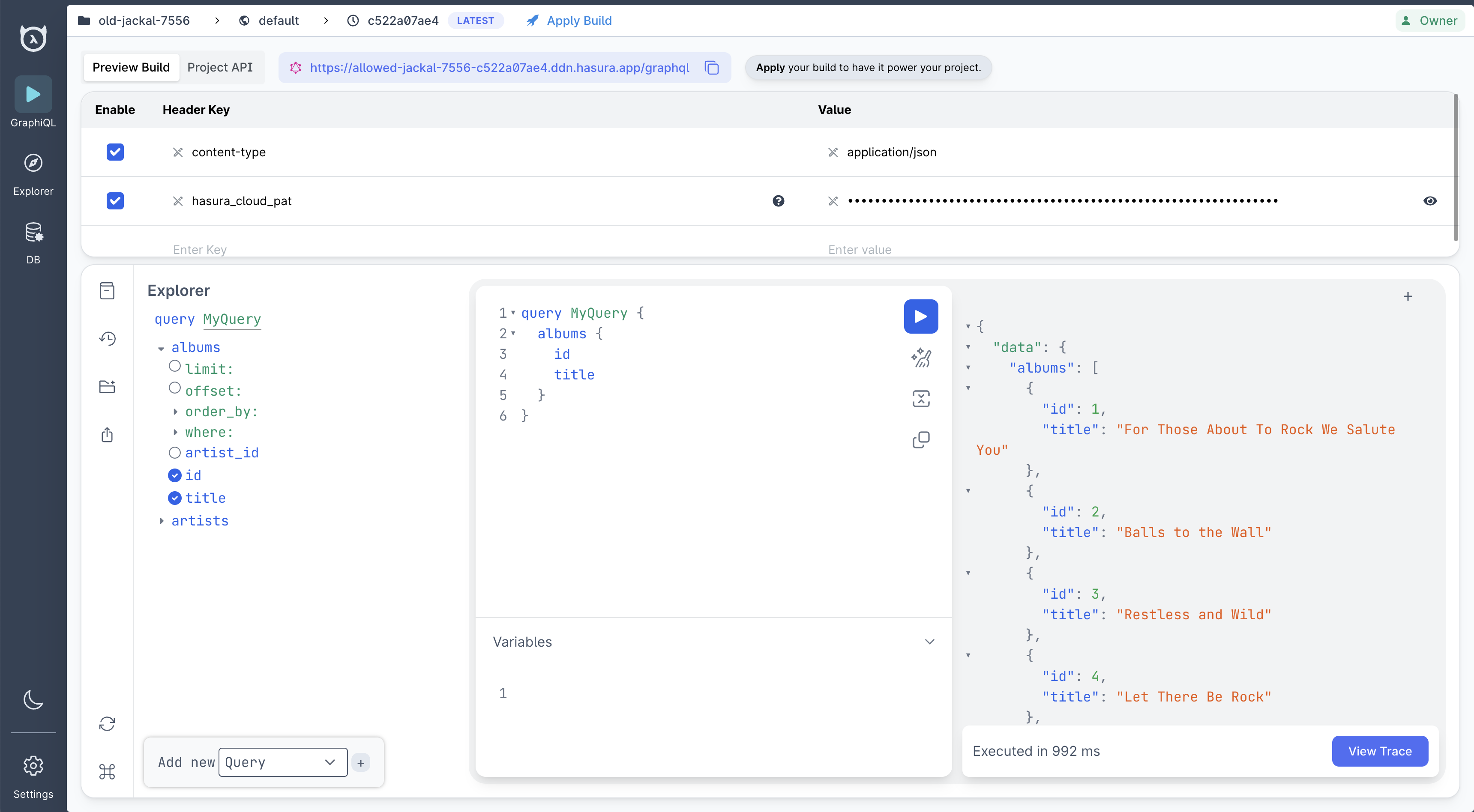

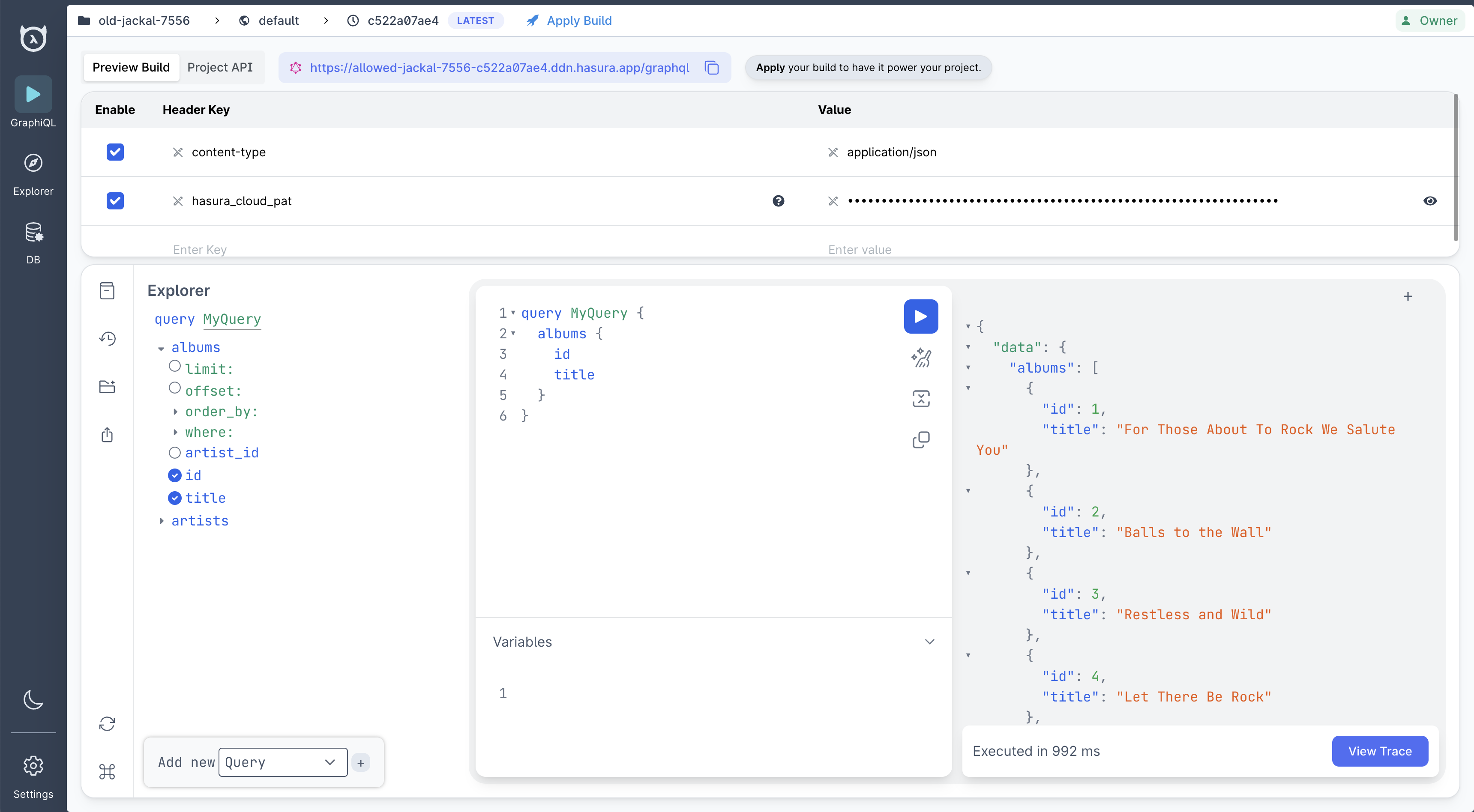

-Now we can create a build and apply it with `hasura3 watch`. This will return a table like the following:

-

-```text

-+---------------+---------------------------------------------------------------+

-| Build ID | 843e6596-9007-4645-abe2-13385da07611 |

-+---------------+---------------------------------------------------------------+

-| Build Version | c522a07ae4 |

-+---------------+---------------------------------------------------------------+

-| Build URL | https://old-jackal-7556-c522a07ae4.ddn.hasura.app/graphql |

-+---------------+---------------------------------------------------------------+

-| Project Id | c3e83cb8-96c5-4016-be0c-c9f4d35e63f7 |

-+---------------+---------------------------------------------------------------+

-| Console Url | https://console.hasura.io/project/old-jackal-7556/graphql |

-+---------------+---------------------------------------------------------------+

-| FQDN | old-jackal-7556-c522a07ae4.ddn.hasura.app |

-+---------------+---------------------------------------------------------------+

-| Environment | default |

-+---------------+---------------------------------------------------------------+

-| Description | Watch build Tue, 23 Jan 2024 |

-| | 20:33:28 +07 |

-+---------------+---------------------------------------------------------------+

-+-----------------+-------------------------------------------------+

-| GraphQL API URL | https://trusty-colt-7733.ddn.hasura.app/graphql |

-+-----------------+-------------------------------------------------+

-```

-

-Paste the Console URL in your browser to see a GraphiQL console for your Hasura graph.

-

-Now you can run a GraphQL query against your data to see it in action.

-

-

-

-Success!

-

-In the next section, we'll start to fill out some of the missing query functionality, beginning with `where` clauses.

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/introduction.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/introduction.md

deleted file mode 100644

index f805cef11..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/introduction.md

+++ /dev/null

@@ -1,63 +0,0 @@

----

-title: "Intro - Let's Build a Connector!"

-metaTitle: 'Course Introduction | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector for Hasura DDN'

----

-

-In this course we will go through the process of creating a Hasura DDN data connector in TypeScript step-by-step.

-

-A data connector in Hasura DDN is an agent which allows you to connect Hasura to any arbitrary data source.

-

-## What will we be building? {#what-will-we-be-building}

-

-We will build a basic connector to an [SQLite](https://www.sqlite.org/index.html) file-system database which you can

-run locally on your machine.

-

-This will familiarize you with the process of creating a connector to the Hasura DDN specification. You can then

-take the concepts you've learned and apply them to any data source you'd like to integrate with Hasura DDN.

-

-## How do I follow along? {#how-do-I-follow-along}

-

-You can watch the walkthrough videos in each section and also follow along with the code in this tutorial by first

-cloning the [repo](/get-started/2-clone/).

-

-The YouTube playlist for all the videos is

-[here](https://www.youtube.com/playlist?list=PLTRTpHrUcSB_WmbGviXZUx0z-jVZXm4Yc).

-

-## What will I learn? {#what-will-i-learn}

-

-You'll learn the fundamentals of creating a basic data connector with the

-[TypeScript Data Connector SDK](https://github.com/hasura/ndc-sdk-typescript). You can then take what you've learned

-into creating a data connector for any data source.

-

-## What do I need to take this tutorial? {#what-do-i-need-to-take-this-tutorial}

-

-It is recommended that you first review the [Hasura NDC Specification](http://hasura.github.io/ndc-spec/), at least to

-gain a basic familiarity with the concepts, but this course is intended to be complementary to the spec.

-

-The dependencies required to follow along here are minimal:

-- You will need [Node](https://nodejs.org/en) with `npm` so that you can run the TypeScript compiler.

-- If you'd like to follow along using the same test-driven approach, then you will also need a working `ndc-test`

- executable on your `PATH`.

-

-`ndc-test` can be installed using the Rust toolchain from the [`ndc-spec`](https://github.com/hasura/ndc-spec) repository.

-

-## How long will this tutorial take? {#how-long-will-this-tutorial-take}

-

-About an hour.

-

-## Additional Resources {#additional-resources}

-

-- The [NDC Specification](https://hasura.github.io/ndc-spec/specification/) details the specification for data connectors which work with Hasura DDN.

-- [Reference Implementation with Tutorial](https://github.com/hasura/ndc-spec/blob/main/ndc-reference/README.md)

-- SDKs for data connectors built with Rust, and the one which we will be using in this course, TypeScript.

- - [NDC Rust SDK](https://github.com/hasura/ndc-hub)

- - [NDC Typescript SDK](https://github.com/hasura/ndc-sdk-typescript)

-- Examples of existing native data connectors built by Hasura.

- - [Clickhouse](https://github.com/hasura/ndc-clickhouse) (Rust)

- - [QDrant](https://github.com/hasura/ndc-qdrant) (Typescript)

- - [Deno](https://github.com/hasura/ndc-typescript-deno) (Typescript)

-- [Hasura DDN Docs](https://hasura.io/docs/3.0/index/)

-- [Hasura DDN Hub](https://hasura.io/connectors#connectors-list) - This is where data connectors are published and can be installed

- into your Hasura DDN instance.

-- [Hasura DDN Open Source Engine codebase](https://github.com/hasura/graphql-engine/tree/master/v3)

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/ordering.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/ordering.md

deleted file mode 100644

index 0c5e14cea..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/ordering.md

+++ /dev/null

@@ -1,9 +0,0 @@

-In this section, we dive into implementing basic sorting functionality.

-

-The focus is on translating the `order_by` property of the query request into a SQL `ORDER BY` clause within our

-TypeScript connector.

-

-We will integrate a new `ORDER BY` clause into our SQL template. This involves constructing a function,

-`visit_order_by_elements`, to interpret the `order_by` property from the query request. This function efficiently

-processes a list of order-by elements, each specifying an expression to sort by, along with the direction of sorting,

-either ascending or descending.

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/ordering/1-video.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/ordering/1-video.md

deleted file mode 100644

index 6c0eaf782..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/ordering/1-video.md

+++ /dev/null

@@ -1,9 +0,0 @@

----

-title: "Video Walkthrough"

-metaTitle: 'Ordering | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-import YoutubeEmbed from "../../src/YoutubeEmbed.js";

-

-

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/ordering/2-order_by.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/ordering/2-order_by.md

deleted file mode 100644

index e3c2200a4..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/ordering/2-order_by.md

+++ /dev/null

@@ -1,109 +0,0 @@

----

-title: "Order By"

-metaTitle: 'Order By | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-

-Now that we've implemented basic predicates and started to see some test cases passing, we'll implement basic

-sorting, and see more of our tests turn green.

-

-Implementing sorting is much simpler than implementing predicates, because there is no recursive structure to process.

-Instead, we have a simple list of orderings that we will turn into a SQL `ORDER BY` clause.

-

-Let's get started.

-

-## Order By

-

-Just like with the `WHERE` clause last time, we will modify our SQL template to add a new `ORDER BY` clause, and

-delegate to a new function to generate the SQL for that new clause.

-

-```typescript

-const order_by_clause = request.query.order_by == null ? "" : `ORDER BY ${visit_order_by_elements(request.query.order_by.elements)}`;

-

-const sql = `SELECT ${fields.join(", ")} FROM ${request.collection} ${where_clause} ${order_by_clause} ${limit_clause} ${offset_clause}`;

-```

-

-In this case, our new helper function is called `visit_order_by_elements`, and it breaks down the `order_by` property

-of the query request.

-

-`visit_order_by_elements` processes a list of "order-by elements", each of which identifies an expression to order by,

-and a sort order - ascending or descending.

-

-Let's implement the new function.

-

-```typescript

-function visit_order_by_elements(values: OrderByElement[]): string {

- if (values.length > 0) {

- return values.map(visit_order_by_element).join(", ");

- } else {

- return "1";

- }

-}

-```

-

-The function makes a special case for zero elements, because otherwise we'd generate invalid SQL.

-

-Otherwise, it delegates to another function, `visit_order_by_element`, to generate the SQL for a single order-by

-element, and concatenates the results. This has the desired effect of implementing the lexicographical order, where

-the first order-by element takes precedence, the second acts as tie-breaker in case of equality, and so on.
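The lexicographical order that the comma-separated `ORDER BY` list produces can be illustrated in memory. This sketch is only an analogy for what the database does with the generated clause; the `Row` and `SortKey` types and the `compareRows` helper are hypothetical names introduced here.

```typescript
// Hypothetical row and sort-key shapes, for illustration only.
type Row = { artist_id: number; id: number };
type SortKey = { column: keyof Row; direction: "asc" | "desc" };

// Compare by the first key; later keys are consulted only to break ties.
function compareRows(a: Row, b: Row, keys: SortKey[]): number {
  for (const key of keys) {
    const left = a[key.column];
    const right = b[key.column];
    if (left === right) {
      continue; // tie on this key, fall through to the next one
    }
    const result = left < right ? -1 : 1;
    return key.direction === "asc" ? result : -result;
  }
  return 0; // equal on every key
}

const rows: Row[] = [
  { artist_id: 2, id: 5 },
  { artist_id: 1, id: 3 },
  { artist_id: 1, id: 4 },
];

// Equivalent in spirit to: ORDER BY artist_id ASC, id DESC
const keys: SortKey[] = [
  { column: "artist_id", direction: "asc" },
  { column: "id", direction: "desc" },
];

const sorted = rows.slice().sort((a, b) => compareRows(a, b, keys));
console.log(sorted.map((row) => row.id)); // [ 4, 3, 5 ]
```

The two rows with `artist_id` 1 come first, and the second key decides their relative order.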

-

-Now let's implement the `visit_order_by_element` function.

-

-```typescript

-function visit_order_by_element(value: OrderByElement): string {

- const direction = value.order_direction === 'asc' ? 'ASC' : 'DESC';

-

- switch (value.target.type) {

- case 'column':

-

- case 'single_column_aggregate':

-

- case 'star_count_aggregate':

- }

-}

-```

-

-Here we'll pattern match on the `value.target.type` property, which determines the type of expression that we need to

-evaluate. For now, we'll only implement sorting based on the simplest column expressions.

-

-For the other cases, we can throw an error:

-

-```typescript

-throw new NotSupported("order_by_aggregate is not supported");

-```

-

-In the column case, we only handle the case where `path` is empty, just like we did for predicates. When we implement

-relationships, we can come back and implement the general case here.

-

-```typescript

-if (value.target.path.length > 0) {

- throw new NotSupported("Relationships are not supported");

-}

-return `${value.target.name} ${direction}`;

-```

-

-Here's the full implementation of `visit_order_by_element`:

-

-```typescript

-function visit_order_by_element(element: OrderByElement): string {

- const direction = element.order_direction === 'asc' ? 'ASC' : 'DESC';

-

- switch (element.target.type) {

- case 'column':

- if (element.target.path.length > 0) {

- throw new NotSupported("Relationships are not supported");

- }

- return `${element.target.name} ${direction}`;

- case 'single_column_aggregate': case 'star_count_aggregate':

- throw new NotSupported("order_by_aggregate is not supported");

- }

-}

-```

-

-In this case, the generated SQL is simple: just the name of the column, followed by the sort direction.
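The two helpers can be exercised together on a sample `order_by` value. The sketch below is self-contained and makes several assumptions: the `OrderByElement` type is a simplified local stand-in for the SDK type, a plain `Error` replaces `NotSupported`, and the function names are hypothetical equivalents of `visit_order_by_element` and `visit_order_by_elements`.

```typescript
// Simplified stand-ins for the SDK's order-by types (assumed shapes).
type OrderByTarget =
  | { type: "column"; name: string; path: unknown[] }
  | { type: "single_column_aggregate" }
  | { type: "star_count_aggregate" };
type OrderByElement = { order_direction: "asc" | "desc"; target: OrderByTarget };

function orderByElementToSql(element: OrderByElement): string {
  const direction = element.order_direction === "asc" ? "ASC" : "DESC";
  const target = element.target;
  switch (target.type) {
    case "column":
      if (target.path.length > 0) {
        throw new Error("Relationships are not supported");
      }
      return `${target.name} ${direction}`;
    case "single_column_aggregate":
    case "star_count_aggregate":
      throw new Error("Ordering by aggregates is not supported");
  }
}

function orderByElementsToSql(elements: OrderByElement[]): string {
  // With no elements, fall back to a constant so the clause stays valid SQL.
  if (elements.length === 0) {
    return "1";
  }
  return elements.map(orderByElementToSql).join(", ");
}

const orderBy = orderByElementsToSql([
  { order_direction: "asc", target: { type: "column", name: "artist_id", path: [] } },
  { order_direction: "desc", target: { type: "column", name: "title", path: [] } },
]);
console.log(`ORDER BY ${orderBy}`);
// ORDER BY artist_id ASC, title DESC
```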

-

-Actually, that's all that's needed to implement sorting. We can rebuild our connector and re-run the test suite to make

-sure that our new test cases are passing.

-

-Next up: aggregates.

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates.md

deleted file mode 100644

index 433f8eb54..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates.md

+++ /dev/null

@@ -1,16 +0,0 @@

----

-title: "Predicates"

-metaTitle: 'Predicates | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-

-Let's dive deeper into enhancing our SQLite database connector by implementing predicates that convert into `WHERE`

-clauses in the generated SQL.

-

-Building on the foundation established in the previous session, this installment focuses on interpreting and translating

-the `where` property of the query request into meaningful SQL `WHERE` clauses.

-

-The tutorial demonstrates how to handle various predicate expressions, including logical expressions like `AND`, `OR`,

-and `NOT`, as well as comparison operator expressions. We emphasize the visitor design pattern for recursively building

-the `WHERE` clause and ensuring the correct handling of query parameters.

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/1-video.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/1-video.md

deleted file mode 100644

index aaa9c57be..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/1-video.md

+++ /dev/null

@@ -1,9 +0,0 @@

----

-title: "Video Walkthrough"

-metaTitle: 'Predicates | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-import YoutubeEmbed from "../../src/YoutubeEmbed.js";

-

-

\ No newline at end of file

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/2-where-clause.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/2-where-clause.md

deleted file mode 100644

index fef4bc627..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/2-where-clause.md

+++ /dev/null

@@ -1,50 +0,0 @@

----

-title: "Where Clause"

-metaTitle: 'Where Clause | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-We've now set up a basic data connector for a SQLite database running locally. Next, we'll start to implement

-predicates by turning them into `WHERE` clauses in the generated SQL.

-

-Predicates in GraphQL are expressions which determine the conditions under which data is retrieved or manipulated.

-The `where` argument of a query is one example.

-

-## Where Clause

-

-Let's pick up from where we left off. We can modify the SQL template in our `fetch_rows` function to include a

-`WHERE` clause:

-

-```typescript

-const sql = `SELECT ${fields.join(", ")} FROM ${request.collection} ${where_clause} ${limit_clause} ${offset_clause}`;

-```

-

-To generate our `WHERE` clause, we will need to interpret the contents of the `where` property of the query request. To

-see what this will look like, we can find some examples in the snapshots we generated last time:

-

-```JSON

-{

- "limit": 10,

- "where": {

- "type": "binary_comparison_operator",

- "column": {

- "type": "column",

- "name": "artist_id",

- "path": []

- },

- "operator": {

- "type": "equal"

- },

- "value": {

- "type": "scalar",

- "value": 5

- }

- }

-}

-```

-

-This predicate expression has type `binary_comparison_operator`, which means it compares a column to a value using an

-operator. In this case, the operator is equality: the predicate asserts that the `artist_id` column equals the literal

-value `5`.

-

-Let's look at other expression types in the next section.

\ No newline at end of file
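
Review note: the snapshot in the removed page can be turned into a parameterized `WHERE` fragment roughly as follows. This is a sketch under stated assumptions: the `EqualityPredicate` interface, the `equality_to_sql` helper, and the `artists` collection name are hypothetical and only mirror the JSON shown in the page, not the SDK's full types.

```typescript
// Simplified shape modelled on the JSON snapshot; not the SDK's full type.
interface EqualityPredicate {
  type: "binary_comparison_operator";
  column: { type: "column"; name: string; path: unknown[] };
  operator: { type: "equal" };
  value: { type: "scalar"; value: unknown };
}

// Emit `column = ?` and record the literal as a query parameter, so the
// value is bound by the database driver rather than spliced into the SQL.
function equality_to_sql(predicate: EqualityPredicate, parameters: unknown[]): string {
  parameters.push(predicate.value.value);
  return `${predicate.column.name} = ?`;
}

const predicate: EqualityPredicate = {
  type: "binary_comparison_operator",
  column: { type: "column", name: "artist_id", path: [] },
  operator: { type: "equal" },
  value: { type: "scalar", value: 5 },
};

const parameters: unknown[] = [];
const where_clause = `WHERE ${equality_to_sql(predicate, parameters)}`;

// `artists` is an assumed collection name used only for this illustration.
const sql = `SELECT * FROM artists ${where_clause} LIMIT 10`;
```

Keeping the literal `5` in a separate parameter list, instead of interpolating it, is what lets the final statement be executed safely with bound values.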

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/3-expression-types.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/3-expression-types.md

deleted file mode 100644

index 7619449bb..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/3-expression-types.md

+++ /dev/null

@@ -1,51 +0,0 @@

----

-title: "Expression Types"

-metaTitle: 'Expression Types | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-In the SDK, these predicate expressions are given the TypeScript type `Expression`, and we can see that there are

-several different types of expression. All of these can be used in the `where` clause of a query, so our `query`

-function will need to handle them via the `fetch_rows` function.

-

-```typescript

-export type Expression = {

- expressions: Expression[];

- type: "and";

-} | {

- expressions: Expression[];

- type: "or";

-} | {

- expression: Expression;

- type: "not";

-} | {

- column: ComparisonTarget;

- operator: UnaryComparisonOperator;

- type: "unary_comparison_operator";

-} | {

- column: ComparisonTarget;

- operator: BinaryComparisonOperator;

- type: "binary_comparison_operator";

- value: ComparisonValue;

-} | {

- column: ComparisonTarget;

- operator: BinaryArrayComparisonOperator;

- type: "binary_array_comparison_operator";

- values: ComparisonValue[];

-} | {

- in_collection: ExistsInCollection;

- type: "exists";

- where: Expression;

-};

-```

-

-There are logical expressions like `and`, `or`, and `not`, which serve to combine other simpler expressions.

-

-There are unary (e.g. `IS NULL`, `IS NOT NULL`) and binary (e.g. `=` (equal), `!=` (not-equal), `>` (greater-than),

-`<` (less-than), `>=` (greater-or-equal)) comparison operator expressions.

-

-And there are `exists` expressions, which are expressed using a sub-query against another collection.

-

-For now, we'll concentrate on logical expressions and comparison operator expressions.

-

-Let's begin to construct the where clause in the next section.

\ No newline at end of file
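
Review note: the logical and comparison expressions described in the removed page lend themselves to a recursive visitor. The sketch below is illustrative only. Its `Expression` type is a deliberately reduced, hypothetical version of the SDK type (plain string column names, one unary and one binary operator), and `visit_expression` is an assumed helper name.

```typescript
// Reduced, hypothetical version of the SDK's `Expression` type.
type Expression =
  | { type: "and"; expressions: Expression[] }
  | { type: "or"; expressions: Expression[] }
  | { type: "not"; expression: Expression }
  | { type: "unary_comparison_operator"; column: string; operator: "is_null" }
  | { type: "binary_comparison_operator"; column: string; operator: "equal"; value: unknown };

// Recursively render an expression as SQL, appending literal values to
// `parameters` in the same order as their `?` placeholders appear.
function visit_expression(parameters: unknown[], expr: Expression): string {
  switch (expr.type) {
    case "and":
      // An empty conjunction is vacuously true.
      if (expr.expressions.length === 0) return "1";
      return `(${expr.expressions.map((e) => visit_expression(parameters, e)).join(" AND ")})`;
    case "or":
      // An empty disjunction is vacuously false.
      if (expr.expressions.length === 0) return "0";
      return `(${expr.expressions.map((e) => visit_expression(parameters, e)).join(" OR ")})`;
    case "not":
      return `(NOT ${visit_expression(parameters, expr.expression)})`;
    case "unary_comparison_operator":
      return `(${expr.column} IS NULL)`;
    case "binary_comparison_operator":
      parameters.push(expr.value);
      return `(${expr.column} = ?)`;
  }
}
```

Wrapping every sub-expression in parentheses avoids having to reason about SQL operator precedence when `AND`, `OR`, and `NOT` are nested.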

diff --git a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/4-building-the-where-clause.md b/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/4-building-the-where-clause.md

deleted file mode 100644

index 5591c1943..000000000

--- a/tutorials/backend/hasura-v3-ts-connector/tutorial-site/content/predicates/4-building-the-where-clause.md

+++ /dev/null

@@ -1,236 +0,0 @@

----

-title: "Building the 'where' clause"

-metaTitle: 'Building the Where clause | Hasura DDN Data Connector Tutorial'

-metaDescription: 'Learn how to build a data connector in Typescript for Hasura DDN'

----

-

-We're going to build up the `WHERE` clause recursively, starting with the simplest expressions at the leaves of the

-predicate expression tree, and working upwards. As we go, we will need to keep track of any query parameters that we

-also need to pass to SQLite, so let's make a place to store those.

-

-**In the `fetch_rows` function**, let's add a new variable to store our parameters:

-

-```typescript

-const parameters: any[] = [];

-```

-

-As we encounter literal values, we'll append them as new parameters to this list.

-

-We also need to add our parameters to the database fetch function call, and to our logging function:

-

-```typescript

-console.log(JSON.stringify({ sql, parameters }));

-

-return state.db.all(sql, ...parameters);

-```

-

-Let's delegate to a new helper function in order to build our `WHERE` clause. Let's call our function