This guide demonstrates:

- How to install IPEX-LLM for Intel NPU on Intel Core™ Ultra Processers (Series 2)

- Python and C++ APIs for running IPEX-LLM on Intel NPU

Important

IPEX-LLM currently only supports Windows on Intel NPU.

- Install Prerequisites

- Install

ipex-llmwith NPU Support - Runtime Configurations

- Python API

- C++ API

- Accuracy Tuning

Note

IPEX-LLM NPU support on Windows has been verified on Intel Core™ Ultra Processers (Series 2) with processor number 2xxV (code name Lunar Lake).

Important

If you have NPU driver version lower than 32.0.100.3104, it is highly recommended to update your NPU driver to the latest.

To update driver for Intel NPU:

-

Download the latest NPU driver

- Visit the official Intel NPU driver page for Windows and download the latest driver zip file.

- Extract the driver zip file

-

Install the driver

- Open Device Manager and locate Neural processors -> Intel(R) AI Boost in the device list

- Right-click on Intel(R) AI Boost and select Update driver

- Choose Browse my computer for drivers, navigate to the folder where you extracted the driver zip file, and select Next

- Wait for the installation finished

A system reboot is necessary to apply the changes after the installation is complete.

Note

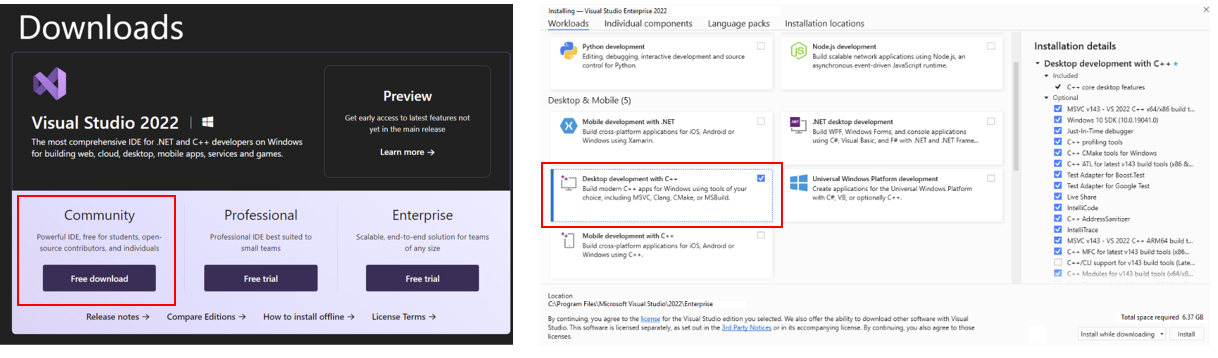

To use IPEX-LLM C++ API on Intel NPU, you are required to install Visual Studio 2022 on your system. If you plan to use the Python API, skip this step.

Install Visual Studio 2022 Community Edition and select "Desktop development with C++" workload:

Visit Miniforge installation page, download the Miniforge installer for Windows, and follow the instructions to complete the installation.

After installation, open the Miniforge Prompt, create a new python environment llm-npu:

conda create -n llm-npu python=3.11Activate the newly created environment llm-npu:

conda activate llm-npuTip

ipex-llm for NPU supports Python 3.10 and 3.11.

With the llm-npu environment active, use pip to install ipex-llm for NPU:

conda activate llm-npu

pip install --pre --upgrade ipex-llm[npu]For ipex-llm NPU support, set the following environment variable with active llm-npu environment:

set BIGDL_USE_NPU=1IPEX-LLM offers Hugging Face transformers-like Python API, enabling seamless running of Hugging Face transformers models on Intel NPU.

Refer to the following table for examples of verified models:

| Model | Model link | Example link |

|---|---|---|

| LLaMA 2 | meta-llama/Llama-2-7b-chat-hf | link |

| LLaMA 3 | meta-llama/Meta-Llama-3-8B-Instruct | link |

| LLaMA 3.2 | meta-llama/Llama-3.2-1B-Instruct, meta-llama/Llama-3.2-3B-Instruct | link |

| Qwen 2 | Qwen/Qwen2-1.5B-Instruct, Qwen/Qwen2-7B-Instruct | link |

| Qwen 2.5 | Qwen/Qwen2.5-3B-Instruct, Qwen/Qwen2.5-7B-Instruct | link |

| MiniCPM | openbmb/MiniCPM-1B-sft-bf16, openbmb/MiniCPM-2B-sft-bf16 | link |

| Baichuan 2 | baichuan-inc/Baichuan2-7B-Chat | link |

| MiniCPM-Llama3-V-2_5 | openbmb/MiniCPM-Llama3-V-2_5 | link |

| MiniCPM-V-2_6 | openbmb/MiniCPM-V-2_6 | link |

| Bce-Embedding-Base-V1 | maidalun1020/bce-embedding-base_v1 | link |

| Speech_Paraformer-Large | iic/speech_paraformer-large-vad-punc_asr_nat-zh-cn-16k-common-vocab8404-pytorch | link |

Tip

You could refer to here for full IPEX-LLM examples on Intel NPU.

IPEX-LLM also provides C++ API for running Hugging Face transformers models.

Refer to the following table for examples of verified models:

| Model | Model link | Example link |

|---|---|---|

| LLaMA 2 | meta-llama/Llama-2-7b-chat-hf | link |

| LLaMA 3 | meta-llama/Meta-Llama-3-8B-Instruct | link |

| LLaMA 3.2 | meta-llama/Llama-3.2-1B-Instruct, meta-llama/Llama-3.2-3B-Instruct | link |

| Qwen 2 | Qwen/Qwen2-1.5B-Instruct, Qwen/Qwen2-7B-Instruct | link |

| Qwen 2.5 | Qwen/Qwen2.5-3B-Instruct, Qwen/Qwen2.5-7B-Instruct | link |

| MiniCPM | openbmb/MiniCPM-1B-sft-bf16, openbmb/MiniCPM-2B-sft-bf16 | link |

Tip

You could refer to here for full IPEX-LLM examples on Intel NPU.

IPEX-LLM provides several optimization methods for enhancing the accuracy of model outputs on Intel NPU. You can select and combine these techniques to achieve better outputs based on your specific use case.

You could set environment variable IPEX_LLM_NPU_QUANTIZATION_OPT=1 before loading & optimizing the model with from_pretrained function from ipex_llm.transformers.npu_model Auto Model class to further enhance model accuracy of low-bit models.

When loading & optimizing the model with from_pretrained function of ipex_llm.transformers.npu_model Auto Model class, you could try to set parameter mixed_precision=True to enable mixed precision optimization when encountering output problems.

IPEX-LLM low-bit optimizations support both channel-wise and group-wise quantization on Intel NPU. When loading & optimizing the model with from_pretrained function of Auto Model class from ipex_llm.transformers.npu_model, parameter quantization_group_size will control whether to use channel-wise or group-wise quantization.

If setting quantization_group_size=0, IPEX-LLM will use channel-wise quantization. If setting quantization_group_size=128, IPEX-LLM will use group-wise quantization with group size 128.

You could try to use group-wise quantization for better outputs.