
Description

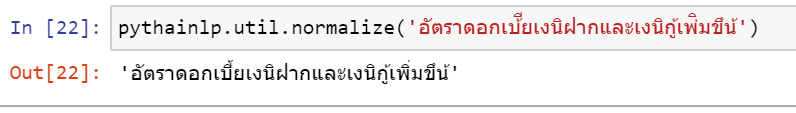

PyThaiNLP's current text normalization relies heavily on rules, which are sufficient in some circumstances. However, it fails badly on the following example:

Input: อัตราดอกเบ้ียเงนิฝากและเงนิกู้เพ่ิมขึน้

Expect: อัตราดอกเบี้ยเงินฝากและเงินกู้เพิ่มขึ้น

Would it be possible to train an ML model for text normalization? I think the approach would be similar to what we did for thai2rom, which is a seq2seq model.

As for training data, we could develop a probabilistic model that perturbs a given word according to some rules, e.g. สระลอย (floating vowels), and then use it to generate the training data for our seq2seq normalization model.
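To make the data-generation idea concrete, here is a rough sketch of what such a perturbation step might look like. Everything in it is an assumption for illustration: the function names `perturb` and `make_pairs`, the set of combining marks, and the two displacement rules (swap a vowel mark with the tone mark after it, or push a mark one character to the right) are hypothetical and are not part of PyThaiNLP.

```python
import random

# Assumed sets of Thai combining marks, chosen for illustration only.
VOWEL_MARKS = set("\u0e31\u0e34\u0e35\u0e36\u0e37\u0e38\u0e39")  # above/below vowels
TONE_MARKS = set("\u0e48\u0e49\u0e4a\u0e4b")                      # tone marks
MARKS = VOWEL_MARKS | TONE_MARKS


def perturb(text, rng, p=0.3):
    """Return a noisy copy of `text`, displacing each combining mark with probability p."""
    chars = list(text)
    i = 0
    while i < len(chars) - 1:
        cur, nxt = chars[i], chars[i + 1]
        if cur in MARKS and rng.random() < p:
            # Rule 1: a vowel mark followed by a tone mark -> swap their order.
            # Rule 2: a mark followed by a non-mark character -> move the mark one step right.
            if (cur in VOWEL_MARKS and nxt in TONE_MARKS) or nxt not in MARKS:
                chars[i], chars[i + 1] = nxt, cur
                i += 2  # skip the displaced mark so it is not moved again
                continue
        i += 1
    return "".join(chars)


def make_pairs(clean_texts, seed=0, p=0.3):
    """Build (noisy, clean) training pairs from clean text using a seeded RNG."""
    rng = random.Random(seed)
    return [(perturb(t, rng, p), t) for t in clean_texts]
```

With `p=1.0`, `perturb("เงิน", random.Random(0), p=1.0)` gives `"เงนิ"`, which is the same kind of error as in the input above. A real generator would probably need more rules and probabilities estimated from actual PDF-parsing output.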

Since many official Thai documents are in PDF, I think this model would be very useful for preprocessing the results of PDF parsing, which is typically not robust to cases such as สระลอย.

@c4n : do you think we can leverage what you've developed for https://github.com/c4n/Thai-Adversarial-Evaluation here?