In this directory, you will find examples on how you could apply IPEX-LLM INT4 optimizations on Qwen-VL models on Intel GPUs. For illustration purposes, we utilize the Qwen/Qwen-VL-Chat as a reference Qwen-VL model.

To run these examples with IPEX-LLM on Intel GPUs, we have some recommended requirements for your machine, please refer to here for more information.

In the example chat.py, we show a basic use case for a Qwen-VL model to start a multimodal chat using chat() API, with IPEX-LLM INT4 optimizations on Intel GPUs.

We suggest using conda to manage environment:

conda create -n llm python=3.11

conda activate llm

# below command will install intel_extension_for_pytorch==2.1.10+xpu as default

pip install --pre --upgrade ipex-llm[xpu] --extra-index-url https://pytorch-extension.intel.com/release-whl/stable/xpu/us/

pip install "transformers<4.37.0"

pip install accelerate tiktoken einops transformers_stream_generator==0.0.4 scipy torchvision pillow tensorboard matplotlib # additional package required for Qwen-VL-Chat to conduct generationWe suggest using conda to manage environment:

conda create -n llm python=3.11 libuv

conda activate llm

# below command will install intel_extension_for_pytorch==2.1.10+xpu as default

pip install --pre --upgrade ipex-llm[xpu] --extra-index-url https://pytorch-extension.intel.com/release-whl/stable/xpu/us/

pip install "transformers<4.37.0"

pip install accelerate tiktoken einops transformers_stream_generator==0.0.4 scipy torchvision pillow tensorboard matplotlib # additional package required for Qwen-VL-Chat to conduct generationNote

Skip this step if you are running on Windows.

This is a required step on Linux for APT or offline installed oneAPI. Skip this step for PIP-installed oneAPI.

source /opt/intel/oneapi/setvars.shFor optimal performance, it is recommended to set several environment variables. Please check out the suggestions based on your device.

For Intel Arc™ A-Series Graphics and Intel Data Center GPU Flex Series

export USE_XETLA=OFF

export SYCL_PI_LEVEL_ZERO_USE_IMMEDIATE_COMMANDLISTS=1

export SYCL_CACHE_PERSISTENT=1For Intel Data Center GPU Max Series

export LD_PRELOAD=${LD_PRELOAD}:${CONDA_PREFIX}/lib/libtcmalloc.so

export SYCL_PI_LEVEL_ZERO_USE_IMMEDIATE_COMMANDLISTS=1

export SYCL_CACHE_PERSISTENT=1

export ENABLE_SDP_FUSION=1Note: Please note that

libtcmalloc.socan be installed byconda install -c conda-forge -y gperftools=2.10.

For Intel iGPU

export SYCL_CACHE_PERSISTENT=1For Intel iGPU and Intel Arc™ A-Series Graphics

set SYCL_CACHE_PERSISTENT=1Note

For the first time that each model runs on Intel iGPU/Intel Arc™ A300-Series or Pro A60, it may take several minutes to compile.

python ./chat.py

Arguments info:

--repo-id-or-model-path REPO_ID_OR_MODEL_PATH: argument defining the huggingface repo id for the Qwen-VL model (e.gQwen/Qwen-VL-Chat) to be downloaded, or the path to the huggingface checkpoint folder. It is default to be'Qwen/Qwen-VL-Chat'.--n-predict N_PREDICT: argument defining the max number of tokens to predict. It is default to be32.

In every session, image and text can be entered into cmd (user can skip the input by type 'Enter') ; please type 'exit' anytime you want to quit the dialouge.

Every image output will be named as the round of session and placed under the current directory.

-------------------- Session 1 --------------------

Please input a picture: http://farm6.staticflickr.com/5268/5602445367_3504763978_z.jpg

Please enter the text: 这是什么?

---------- Response ----------

这是一张图片,展现了一个穿着粉色条纹连衣裙的小女孩,她手持一只穿粉色裙子的小熊。这个场景发生在一个户外环境,有砖块背景墙和花朵。

-------------------- Session 2 --------------------

Please input a picture:

Please enter the text: 这个小女孩多大了?

---------- Response ----------

根据图片中的描述,这个小女孩应该是年龄较小的孩子,但具体年龄难以确定。从她的外表来看,可能是在5岁左右。。

-------------------- Session 3 --------------------

Please input a picture:

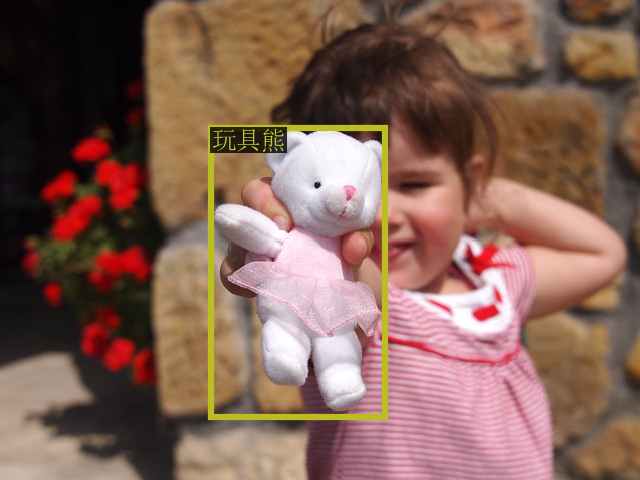

Please enter the text: 在图中检测框出玩具熊

---------- Response ----------

<ref>玩具熊</ref><box>(330,267),(603,869)</box>

-------------------- Session 4 --------------------

Please input a picture: exit

The sample input image in Session 1 is (which is fetched from COCO dataset):

The sample output image in Session 3 is: